A team of international researchers recently taught AI to justify its reasoning and point to evidence when it makes a decision. The ‘black box’ is becoming transparent, and that’s a big deal.

Figuring out why a neural network makes the decisions it does is one of the biggest concerns in the field of artificial intelligence. The black box problem, as it’s called, makes it hard to trust AI systems, because we can’t check how they reached their conclusions.

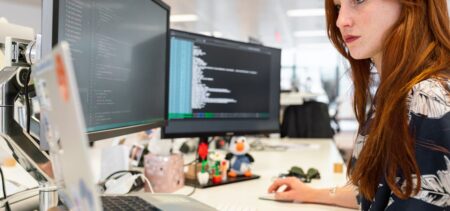

The team was made up of researchers from UC Berkeley, University of Amsterdam, MPI for Informatics, and Facebook AI Research. The new research builds on the group’s previous work, but this time around they’ve taught the AI some new tricks.