High‑end GPUs remained scarce, queue times stretched from hours to days, and model teams learned the hard way that single‑cloud loyalty often delayed launches more than it protected them from complexity. The case for cross‑cloud was not philosophical; it was practical—get access to capacity, shorten feedback loops, and keep latency in check without gutting pipelines. Against that backdrop, CoreWeave’s alignment with Google Cloud aimed to make “multi‑cloud by default” feel less like a juggling act and more like a coherent system. The announcement introduced three planks—CoreWeave Interconnect, SUNK Anywhere, and LOTA Cross‑Cloud—plus new integrations with Weights & Biases on Google Cloud and commercial policies that sought to neutralize data‑movement penalties. Early adopters such as Noble Machines and PathAI surfaced a simple motivator: run training and inference where it makes sense today, not where quotas force decisions.

Building a Unified Fabric: Network, Compute, and Data

The networking pillar targeted the mess that usually sits between clouds: private circuits, third‑party gear, and opaque security models. CoreWeave Interconnect used Google Cloud’s Partner Cross‑Cloud Interconnect to present a private, fiber‑based path with predictable performance and built‑in MACsec. That meant packets stayed on a hardened backbone rather than hopping public segments, exchanging DIY tunneling for a managed link that aimed at low latency and high reliability. Customers did not need to add extra networking vendors or deploy new appliances, which trimmed both procurement cycles and operational risk. Interconnect entered private preview in select regions with flexible bandwidth options, aligning with a carrier‑grade backbone that spanned 44 data centers. The goal was to move past “best effort” and make cross‑cloud traffic feel like the same LAN.

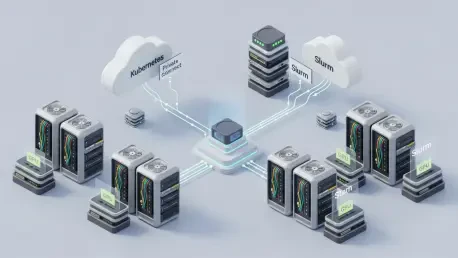

Compute and orchestration formed the second plank, meeting teams where they already operated. SUNK Anywhere extended CoreWeave’s hybrid stack—Kubernetes plus Slurm—across heterogeneous GPU pools spanning CoreWeave, Google Cloud, AWS, Azure, and on‑prem clusters. Instead of rebuilding training code for each environment, operators could preserve job submission patterns, checkpoint logic, and autoscaling policies while bursting to the capacity that cleared bottlenecks. Slurm‑aware schedulers managed multi‑node distribution, while Kubernetes primitives handled services and agents running sidecar tools. This approach mattered when quota ceilings or preemption disrupted single‑cloud plans; migration overhead often killed iteration speed. By keeping orchestration consistent, teams could push transformer fine‑tunes to where #00s were available, land latency‑sensitive inference closer to users, and keep the MLOps backbone unchanged.

Operational Reality: Data Locality, Economics, and Tooling

Data locality typically determined whether cross‑cloud training was theory or throughput. LOTA Cross‑Cloud extended CoreWeave’s Local Object Transport Accelerator to external providers, caching hot shards near GPU nodes no matter where the job landed. Pairing that cache with CoreWeave AI Object Storage created a pattern: store primary datasets in an AI‑tuned bucket, then hydrate cores with high‑throughput reads as training advanced through epochs. This reduced tail latency for small files, sustained multi‑GB/s aggregate rates during shuffles, and kept restart times tolerable when resuming from checkpoints. The result was predictable access semantics across providers, a critical factor for multi‑node runs that otherwise stalled on stragglers. Integrity and durability still anchored the storage layer, but LOTA handled placement so that operators focused on models, not plumbing.

Economics and observability closed the loop. The Zero Egress Migration program paid egress fees to move datasets from major clouds into CoreWeave through a managed, secure transfer, cutting a frequent blocker to rebalancing storage. Once data resided in CoreWeave AI Object Storage, there were no CoreWeave egress fees—even when LOTA Cross‑Cloud served that data to jobs running on other clouds. On the monitoring front, new W&B integrations on Google Cloud gave agent teams a common pane for reliability metrics, rollout analysis, and fast iteration. Noble Machines and PathAI adopted these pieces to keep experiments flowing while spreading compute across providers. For practitioners considering next steps, a pragmatic path had emerged: validate latency with Interconnect in preview regions, map orchestration to SUNK Anywhere without touching model code, anchor primary datasets in AI Object Storage, then use LOTA to localize hot data near GPUs. This playbook favored steady wins—measured latency, predictable throughput, and less toil—over vendor lock‑in, and it pointed toward cross‑cloud that operated as a system rather than a patchwork.