Budget pressure, AI ambition, and a reluctance to replatform all at once are pushing enterprises toward one operating model that modernizes delivery, contains cost, and keeps core systems available without risky big-bang moves. Hyve’s collaboration with Red Hat targets exactly that demand with a fully managed OpenShift platform that runs containers and virtual machines together under a single control plane.

This analysis examines how that design reshapes migration paths, spend patterns, and AI readiness. By aligning operations across workload types and shifting licensing to physical resources, the offer positions modernization as a sequence of low-risk steps rather than a cliff jump.

The lens here is pragmatic: continuity with modernization, predictable economics, and operational discipline at scale. The thesis is that a managed OpenShift baseline creates the runway to move AI pilots into production while preserving critical VM-based services.

From Virtualization to Cloud-Native: The Context Behind the Shift

Over the last decade, container-first patterns promised faster delivery, higher density, and repeatable environments. Yet most estates still depend on VM-bound databases, commercial apps, and line-of-business systems that resist rapid refactoring, leaving teams straddling two stacks and duplicating effort.

Kubernetes became the de facto control plane, with OpenShift layering policy, automation, and hybrid consistency on top. Meanwhile, per-workload licensing inflated costs as microservices multiplied and environments spanned on-prem and cloud, complicating chargeback and budget forecasts.

AI pressure raises the stakes: GPU-aware scheduling, reproducible pipelines, and cross-environment governance are now mandatory, not optional. Against this backdrop, a managed OpenShift platform that supports both VMs and containers offers a bridge, reducing transition friction while preparing for AI operations.

Unifying Operations and Economics on OpenShift

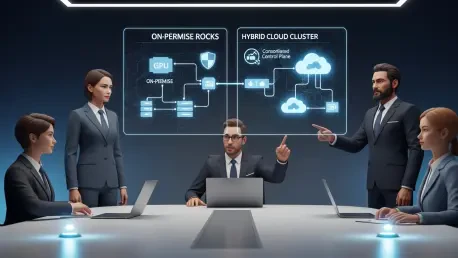

Operational Convergence: One Control Plane for VMs and Containers

Running VMs and containers side by side on OpenShift shifts day-to-day work onto a single platform. Teams can migrate incrementally, containerizing each application when it is ready, while keeping legacy apps stable and shrinking tool sprawl and policy duplication across platforms.

Centralized networking, observability, and security improve release cadence and governance, and shared pipelines cut rollback confusion. There are caveats: VM tuning, stateful services, and GPU drivers need careful handling, but Hyve’s managed runbooks and blueprints are designed to de-risk those edges.

Cost Predictability: Resource-Based Licensing vs. Per-Workload Models

Shifting licensing to physical server resources stabilizes spend as estates grow, avoiding penalties for microservice proliferation or VM sprawl. This aligns with utilization-driven planning and enables clearer forecasting and chargeback across business units.
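The contrast between the two licensing models can be made concrete with a little arithmetic. The sketch below is illustrative only: the fee values, the `Estate` type, and both cost functions are hypothetical and do not reflect actual Hyve or Red Hat pricing, which the article does not state.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Estate:
    workloads: int  # VMs plus container services
    servers: int    # physical hosts carrying those workloads


# Hypothetical annual fees, chosen only to make the arithmetic easy to follow.
PER_WORKLOAD_FEE = 300.0
PER_SERVER_FEE = 12_000.0


def per_workload_cost(estate: Estate) -> float:
    """Spend grows with every VM or service that is added."""
    return estate.workloads * PER_WORKLOAD_FEE


def resource_based_cost(estate: Estate) -> float:
    """Spend grows only when physical capacity is added."""
    return estate.servers * PER_SERVER_FEE


# Same hardware before and after splitting applications into microservices.
before = Estate(workloads=200, servers=10)
after = Estate(workloads=600, servers=10)

for label, estate in (("before", before), ("after", after)):
    print(label, per_workload_cost(estate), resource_based_cost(estate))
```

Under these assumed numbers, tripling the workload count triples the per-workload bill, while the resource-based bill stays flat until another server is added. The break-even point depends entirely on real prices and on how densely workloads are packed.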

Trade-offs remain: capacity discipline is essential to prevent idle hardware, and GPU node rightsizing is a must. Combining autoscaling for elasticity with reserved capacity for critical AI paths helps balance performance guarantees with budget control.
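One way to reason about that balance is to treat reserved capacity as a floor and the autoscaling limit as a ceiling, then look at how much hardware sits idle at a given demand. This is a minimal sketch with hypothetical function names and parameters; it models the planning arithmetic only and is not an OpenShift or autoscaler API.

```python
import math


def nodes_needed(demand_gpus: float, gpus_per_node: int,
                 reserved_nodes: int, max_nodes: int) -> int:
    """Node count for a given GPU demand, clamped between the reserved
    floor and the autoscaling ceiling."""
    required = math.ceil(demand_gpus / gpus_per_node)
    return max(reserved_nodes, min(required, max_nodes))


def idle_fraction(demand_gpus: float, gpus_per_node: int, nodes: int) -> float:
    """Share of provisioned GPU capacity that is unused (0.0 when saturated)."""
    capacity = nodes * gpus_per_node
    return max(0.0, 1 - demand_gpus / capacity)


# Quiet period: the reserved floor keeps two nodes up for a small demand.
quiet_nodes = nodes_needed(demand_gpus=3, gpus_per_node=4,
                           reserved_nodes=2, max_nodes=6)
# Peak period: demand exceeds the ceiling, so the pool saturates.
peak_nodes = nodes_needed(demand_gpus=30, gpus_per_node=4,
                          reserved_nodes=2, max_nodes=6)

print(quiet_nodes, idle_fraction(3, 4, quiet_nodes))
print(peak_nodes, idle_fraction(30, 4, peak_nodes))
```

A high idle fraction in quiet periods suggests the reserved floor is oversized. Saturation at peak suggests the ceiling, or the node size, needs revisiting.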

AI Readiness and Hybrid Consistency: From Pilot to Production

OpenShift’s consistent substrate across private and hybrid environments standardizes MLOps, GPU scheduling, and policy enforcement. That uniformity lowers friction when promoting models from lab runs to production SLAs.

Regional needs matter. Data sovereignty in the EU and APAC, egress costs, and uneven GPU supply all influence where workloads are placed. Hyve’s planned Australian office signals demand for local compliance alignment and low-latency support. The ability to place AI workloads on the same platform, close to regulated data, also counters the common myth that AI demands a wholesale replatform.

What’s Next: Trends That Could Reshape the Stack

Expect tighter coupling of AI toolchains via OpenShift Operators, stronger support for GPUs and DPUs, and policy-as-code spanning data, models, and runtimes. GitOps is set to extend from app delivery to full-stack automation across infra and ML pipelines.

Economics and regulation will reinforce managed platforms as talent gaps persist and rules on data residency, model transparency, and supply chain security intensify. The likely endpoint is blended estates where legacy VMs, microservices, and AI inference run on a unified mesh tuned for performance per dollar.

Practical Takeaways and Recommendations

Start with discovery: classify VM workloads, map data gravity, and size GPU demand to stage migrations without service disruption. Early wins anchor momentum and trust.
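The discovery step can be sketched as a simple classification pass over a workload inventory. Everything here is an assumption made for illustration: the `Workload` fields, the wave names, and the rules in `migration_wave` are hypothetical, and a real assessment would weigh many more factors, such as vendor support, latency, and data gravity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    stateful: bool
    source_available: bool  # can the team rebuild and repackage it?
    gpu_hours_per_month: float = 0.0


def migration_wave(w: Workload) -> str:
    """Assign a workload to a hypothetical migration wave."""
    if not w.source_available:
        return "run-as-vm"           # e.g. commercial apps that resist refactoring
    if w.stateful:
        return "containerize-later"  # needs storage and failover planning first
    return "containerize-first"      # stateless services make good early wins


inventory = [
    Workload("billing-db", stateful=True, source_available=False),
    Workload("orders-api", stateful=False, source_available=True),
    Workload("session-store", stateful=True, source_available=True),
    Workload("fraud-model", stateful=False, source_available=True,
             gpu_hours_per_month=120.0),
]

waves = {w.name: migration_wave(w) for w in inventory}
total_gpu_hours = sum(w.gpu_hours_per_month for w in inventory)

print(waves)
print(total_gpu_hours)
```

Grouping workloads this way gives a first draft of the migration sequence and a rough GPU demand figure to feed into capacity sizing.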

Adopt resource-based planning with autoscaling and quotas, pair it with chargeback, and rightsize nodes for CPU, memory, and accelerators. Standardize security via RBAC, network policy, and image provenance, and lean on Hyve’s managed SRE to reduce toil.

Pilot AI near existing data and identity systems, measure with realistic loads, and validate cost-performance before scaling. Track deployment frequency, failure rates, utilization, and unit economics to refine placement and licensing footprints over time.
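The four tracking measures named above reduce to simple ratios. The following sketch shows one plausible way to compute them. The function name, its parameters, and the choice of cost per thousand requests as the unit-economics measure are assumptions, not definitions taken from Hyve or Red Hat.

```python
def delivery_metrics(deploys: int, failed_deploys: int, days: int,
                     used_core_hours: float, available_core_hours: float,
                     monthly_cost: float, requests_served: int) -> dict:
    """Compute the four tracking ratios for one reporting period."""
    return {
        # Average production deployments per day in the period.
        "deployment_frequency": deploys / days,
        # Share of deployments that needed a rollback or hotfix.
        "change_failure_rate": failed_deploys / deploys,
        # Fraction of provisioned compute that was actually consumed.
        "utilization": used_core_hours / available_core_hours,
        # Unit economics: platform cost per 1,000 requests served.
        "cost_per_1k_requests": monthly_cost / (requests_served / 1000),
    }


# Illustrative numbers only.
metrics = delivery_metrics(
    deploys=40, failed_deploys=4, days=20,
    used_core_hours=6_000, available_core_hours=10_000,
    monthly_cost=50_000, requests_served=2_000_000,
)
print(metrics)
```

Tracked period over period, falling utilization or rising cost per thousand requests points to oversized nodes or poorly placed workloads, which is the signal needed to adjust placement and licensing footprints.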

Bringing It Together: Pragmatism Over Hype

The Hyve-Red Hat offer blends modernization with continuity: one OpenShift platform for VMs and containers, resource-based licensing for predictable spend, and a hybrid foundation that is ready for production-grade AI. Its value rests on fewer moving parts and clearer economics.

The strategic takeaway is simple and actionable: unify first to cut complexity, then optimize for performance per dollar, and scale AI where consistency and governance already exist. This path keeps today’s services steady while unlocking tomorrow’s gains.