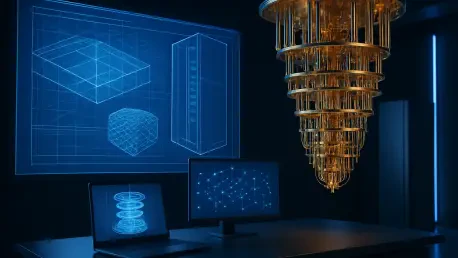

High-performance computing is moving beyond purely classical hardware toward an architectural framework in which quantum and classical systems operate as a single, cohesive entity. IBM has introduced the industry’s first published reference architecture for quantum-centric supercomputing, creating a standardized map for the next generation of discovery. The framework is designed to reduce the long-standing friction between quantum processing units (QPUs) and traditional high-performance components such as CPUs and GPUs.

By formalizing these interactions, the architecture provides a foundation for executing workloads that remain out of reach for even the most advanced classical clusters. The focus shifts away from viewing quantum hardware as a standalone curiosity and toward treating it as a specialized accelerator within a broader ecosystem. This combination allows massive datasets and complex calculations to be managed in a unified environment, so that scientific work can scale without bespoke, incompatible hardware configurations.

Contextual Background and the Evolution of Quantum Research

In the early 1980s, Richard Feynman proposed that simulating the intricacies of nature would require a machine built on the principles of quantum mechanics itself. For a long time, this vision remained a laboratory aspiration, confined to experimental setups and isolated research silos. The new blueprint marks the formal transition from these early experiments to a working computational model that integrates cloud-based and on-premises environments.

A unified blueprint is now essential for the global scientific community as it addresses urgent challenges in chemistry and materials science. By moving away from fragmented hardware, researchers can rely on an integrated infrastructure that supports consistent results across different platforms. This evolution reflects a growing maturity in the field, turning theoretical potential into a structured utility for high-performance computing that meets modern industry demands.

Research Methodology, Findings, and Implications

Methodology

The methodology involves the strategic use of open software frameworks, specifically Qiskit, to orchestrate workflows across heterogeneous systems. This software acts as a bridge, coordinating tasks between the quantum processors and the classical machines that support them. By utilizing a modular design, the architecture allows for the flexible integration of various hardware components without disrupting the overall system logic.
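The modular coordination described above can be illustrated with a minimal sketch. It does not use Qiskit or reflect IBM's actual implementation; the `Backend` interface, the `Orchestrator` class, and both stand-in backends are hypothetical names introduced only to show how a workflow can route steps to interchangeable classical and quantum resources.

```python
import math
from dataclasses import dataclass, field
from typing import Dict, List, Protocol


class Backend(Protocol):
    """Hypothetical interface: any resource that can run one workflow step."""

    def run(self, value: float) -> float: ...


class ClassicalPreprocess:
    """Stand-in for a CPU/GPU step: rescales an input into a rotation angle."""

    def run(self, value: float) -> float:
        return value * math.pi


class MockQPU:
    """Stand-in for a quantum step, computed analytically rather than on hardware.

    The expectation value <Z> after applying RY(angle) to |0> is cos(angle).
    """

    def run(self, value: float) -> float:
        return math.cos(value)


@dataclass
class Orchestrator:
    """Routes each workflow step to whichever backend is registered for it."""

    backends: Dict[str, Backend] = field(default_factory=dict)

    def register(self, kind: str, backend: Backend) -> None:
        self.backends[kind] = backend

    def execute(self, steps: List[str], value: float) -> float:
        # Each step consumes the previous step's output.
        for kind in steps:
            value = self.backends[kind].run(value)
        return value


orchestrator = Orchestrator()
orchestrator.register("classical", ClassicalPreprocess())
orchestrator.register("quantum", MockQPU())

# Classical preprocessing feeds the (mock) quantum step.
result = orchestrator.execute(["classical", "quantum"], 1.0)
print(result)
```

Because `execute` depends only on the `Backend` interface, a different object could be registered under `"quantum"` without changing the workflow logic, which is the property the modular design is meant to provide.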

Furthermore, the design incorporates high-speed networking and shared classical storage to minimize latency during data exchange. This hardware-agnostic approach ensures that the blueprint remains relevant even as individual components are upgraded or replaced. The goal is to maintain a seamless flow of information, allowing the quantum units to function as true accelerators within a traditional supercomputing cluster.
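To see why latency between the classical and quantum sides matters, consider a hybrid optimization loop, sketched below under simplifying assumptions. The function `qpu_expectation` is a hypothetical stand-in for an accelerator call, evaluated analytically here for a single-qubit RY rotation, and the classical side uses the parameter-shift rule with plain gradient descent. None of this is taken from the reference architecture itself.

```python
import math


def qpu_expectation(theta: float) -> float:
    """Hypothetical accelerator call: <Z> for RY(theta)|0>, which equals cos(theta)."""
    return math.cos(theta)


def parameter_shift_gradient(theta: float) -> float:
    """Estimate d<Z>/d(theta) from two shifted evaluations (parameter-shift rule)."""
    shift = math.pi / 2
    return 0.5 * (qpu_expectation(theta + shift) - qpu_expectation(theta - shift))


def minimize(theta: float, learning_rate: float = 0.4, steps: int = 100) -> float:
    """Classical gradient descent; every step makes two round trips to the 'QPU'."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta


theta_opt = minimize(theta=0.5)
energy = qpu_expectation(theta_opt)
print(theta_opt, energy)
```

Each iteration alternates between a classical update and quantum evaluations, so on real hardware the number of round trips grows with both the step count and the number of parameters. That is where low-latency networking and shared storage would be expected to pay off.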

Findings

The work establishes a model in which quantum and classical resources operate within a single ecosystem. Specific computational bottlenecks, particularly in molecular simulation and optimization, are reported to ease significantly when the relevant subproblems are offloaded to quantum processors. This suggests that the hybrid model is not only feasible but essential for future performance gains.

A critical finding concerns scalability. The blueprint is described as a living one, meant to be revised over time: as both algorithms and physical hardware evolve, the architecture can adapt without a total redesign of the underlying infrastructure. This flexibility indicates that large-scale quantum utility is achievable through iterative, standardized integration that accommodates growth in both speed and complexity.

Implications

The implications of this standardized stack are profound, as it significantly lowers the barrier to entry for various industries. Organizations that previously lacked the expertise to build quantum workflows from scratch can now leverage a proven framework to apply quantum power to practical experiments. This democratization of technology accelerates the pace of innovation across a wide range of scientific and commercial disciplines.

Moreover, the adoption of a common reference architecture encourages a more collaborative global ecosystem. By aligning different partners and clients around a shared set of technical standards, the industry can focus on refining algorithms rather than troubleshooting integration issues. This shift toward standardization signals the beginning of a more industrial and professional approach to quantum-classical hybrid computing.

Reflection and Future Directions

Reflection

Looking back, the primary challenge was harmonizing two fundamentally different computing modalities into a single workflow. The shift from theoretical exploration to practical utility required deep technical integration to address synchronization and data-movement bottlenecks. Open-source software proved to be the vital link in this process, connecting the specialized needs of hardware manufacturers with the functional demands of end users.

The success of this integration highlighted the importance of moving beyond the hardware itself to consider the entire software and networking stack. It became clear that the value of a quantum processor is only fully realized when it is supported by a robust classical infrastructure. This reflection emphasizes that the future of computing lies in the intelligent combination of diverse processing strengths rather than a single technology.

Future Directions

Future efforts will likely focus on more advanced error-correction methods to increase the reliability and duration of quantum computations. Researchers are also investigating ways to further miniaturize and optimize the interface between quantum units and classical hardware to reduce operational overhead. These improvements will be critical as the scale and complexity of the workloads continue to grow over the coming years.

Additionally, there is significant potential in expanding the reference architecture to include specialized artificial intelligence accelerators alongside traditional GPUs. As AI models become more complex, integrating them with quantum processors could unlock new levels of efficiency in data processing and pattern recognition. This expanded scope would help keep the supercomputing environment at the leading edge of technological capability.

Conclusion: A New Era of Scientific Discovery

The introduction of the quantum-centric blueprint reshapes the landscape of high-performance computing by providing a clear path toward a hybrid future. This milestone shows that quantum technology is no longer an isolated field but an emerging cornerstone of modern scientific infrastructure. Industry leaders are beginning to adopt these standardized workflows and to realize the practical benefits of offloading complex calculations to specialized processors.

The focus now shifts toward refining the interoperability of these systems to tackle even more ambitious global challenges in medicine and energy. By establishing a scalable and modular foundation, the architecture helps the scientific community prepare for the next wave of hardware breakthroughs. This transition positions quantum-centric systems as a primary engine for discovery and technological advancement.