The gap between a successful laboratory experiment and a resilient, revenue-generating enterprise application is often wider than technology leaders anticipate when they launch their first neural network projects. A prototype might impress a small group of internal stakeholders with its clever responses, but a system that serves millions of users worldwide faces fundamentally different requirements. Organizations have learned that the “pilot” phase is a playground for validating use cases, whereas the move to production demands rigorous attention to architecture, scalability, and long-term maintenance. In 2026, the industry's emphasis is shifting from the novelty of the model itself to the robustness of the infrastructure that supports it. The shift is especially visible in how companies manage unstructured data: they are moving away from makeshift solutions toward specialized vector databases that can sustain the demands of real-time retrieval.

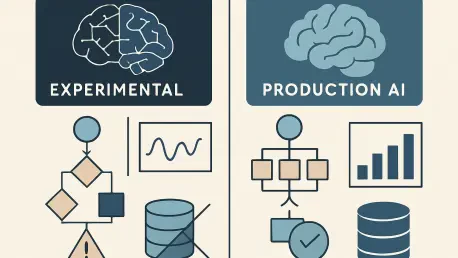

This infrastructure is the backbone of Retrieval-Augmented Generation (RAG) and autonomous agents. Platforms such as Qdrant, Pinecone, and Weaviate have become essential for organizations that need to store and retrieve embeddings of complex data with high precision. Established cloud providers and data platforms such as AWS, Oracle, and Databricks have added vector search to their existing suites, yet specialized services such as Qdrant Cloud are carving out a distinct niche. These specialists aim to bridge the “production gap” with high-performance tools engineered for the heavy lifting of enterprise workloads. The choice between an experimental setup and a production-grade environment often determines whether an AI initiative delivers a genuine competitive advantage or becomes a costly technical liability.

Transitioning from Prototypes to Enterprise-Grade Systems

Experimental AI functions as the vital testing ground where developers can explore the boundaries of machine learning without the pressure of immediate operational risks. During this phase, the primary objectives are building prototypes, testing basic capabilities, and ensuring that a specific use case is even feasible. These environments are typically controlled and small-scale, allowing for rapid iteration and frequent failures. In these isolated settings, a developer might use a basic open-source model and a small dataset to prove a concept. Because the stakes are low, the focus remains almost entirely on the output of the model rather than the plumbing that keeps the system running.

In contrast, Production AI involves the full-scale integration of these technologies into the core machinery of business operations. Here, the priorities shift dramatically toward reliability, governed access, and the ability to scale on demand. When an AI agent is responsible for customer-facing interactions or financial decision-making, there is no room for the unpredictability that characterizes the experimental phase. Production systems must interact seamlessly with existing data architectures, such as the Databricks lakehouse or a Snowflake warehouse, ensuring that the AI has access to the right data at the right time. This transition requires a move away from “black box” experimentation toward a structured operational framework that prioritizes consistent performance over mere novelty.

Specialized platforms like Qdrant have become instrumental in this evolution by bridging the two worlds. Initial experiments may run adequately on general-purpose hardware, but real-world enterprise workloads require tools designed for high concurrency and low latency. Industry analysts, including Devin Pratt of IDC, suggest that the success of these deployments often hinges on how well specialized AI tools integrate with the broader data stack. As enterprises move beyond the honeymoon phase of AI discovery, the focus is increasingly on building systems that are not just smart but also durable and easy to manage within a complex corporate ecosystem.

Evaluating Key Performance and Operational Metrics

Infrastructure and Indexing Speed

A defining characteristic of the experimental phase is the reliance on Central Processing Units (CPUs) for data processing. For small-scale projects involving limited datasets, CPUs provide a cost-effective and accessible way to build initial indexes and run basic queries. However, as the volume of data grows, this approach quickly reveals its limitations. CPU-only indexing creates a significant bottleneck, leading to long wait times and an inability to keep up with the influx of new information. In an experimental setting, a delay in data processing might be a minor inconvenience, but in a live environment, it can render an AI application useless.
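
The scaling problem is easiest to see in the simplest form of retrieval, an exact brute-force scan. The pure-Python sketch below is illustrative only and is not how Qdrant or any production engine searches; it shows that without an index, each query must be scored against every stored vector, so per-query work grows linearly with the dataset. Approximate indexes avoid that cost, but building them is itself compute-heavy, which is where CPU-only pipelines start to lag.

```python
import heapq
import math


def cosine(a, b):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def brute_force_top_k(query, vectors, k=3):
    """Exact k-NN over a dict of {id: vector}.

    Returns the top-k ids and the number of similarity computations made.
    That count always equals len(vectors): per-query cost grows linearly.
    """
    comparisons = 0
    scored = []
    for vid, vec in vectors.items():
        scored.append((cosine(query, vec), vid))
        comparisons += 1
    top = heapq.nlargest(k, scored)
    return [vid for _, vid in top], comparisons


vectors = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [0.9, 0.1]}
print(brute_force_top_k([1.0, 0.0], vectors, k=2))  # (['a', 'c'], 3)
```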

Production AI demands a shift toward GPU-accelerated indexing to maintain the necessary speed. Qdrant Cloud has recently added GPU support for building vector indexes, so indexes that could take hours or days to construct on traditional hardware can be built far more quickly. This acceleration is critical for maintaining “data freshness,” the system's ability to reflect the most current information available. If indexing lags behind incoming data, an AI agent retrieves outdated information and the quality of its output declines. High-performance indexing keeps the index current, and retrieval therefore useful, even as the dataset grows to billions of vectors.
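
Why lagging indexing erodes freshness can be shown with simple arithmetic. This sketch assumes constant ingest and indexing rates and first-in, first-out indexing; the rates in the example are hypothetical.

```python
def indexing_backlog(ingest_per_sec, index_per_sec, seconds):
    """Estimate the unindexed backlog and the resulting freshness lag.

    Assumes constant rates and first-in, first-out indexing. Returns
    (vectors waiting to be indexed, seconds the newest vector must wait).
    """
    backlog = max(0.0, float(ingest_per_sec - index_per_sec) * seconds)
    lag_seconds = backlog / index_per_sec  # time to drain what is ahead of it
    return backlog, lag_seconds


# Hypothetical rates: 5,000 new vectors/s arriving, 4,000/s indexed.
print(indexing_backlog(5_000, 4_000, 3_600))  # (3600000.0, 900.0)
print(indexing_backlog(5_000, 6_000, 3_600))  # (0.0, 0.0)
```

With these made-up numbers, after one hour 3.6 million vectors are not yet searchable and the newest one waits about 15 minutes; once indexing throughput exceeds the ingest rate, no backlog forms.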

The difference in latency between the two stages can be the difference between a helpful assistant and a frustrating user experience. Experimental setups often suffer retrieval times that fluctuate widely with system load. Production-grade systems use optimized kernels and specialized hardware to answer queries in milliseconds with predictable tail latency. That responsiveness is mandatory for agentic applications that perform multiple retrieval steps in a single workflow. By offloading heavy computation such as index construction to GPUs, organizations can keep their AI nimble and accurate regardless of the scale of the operation.
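
The latency requirement compounds in agentic workflows. A back-of-the-envelope sketch, assuming retrieval steps run one after another and that each step's tail latency is independent (a simplification): the workflow's latency is the sum of its steps, and the chance that at least one step lands in the slowest 1% grows with the number of steps. The step timings are hypothetical.

```python
def workflow_latency_ms(step_latencies_ms):
    """Sequential retrieval steps: the workflow waits for every step in turn."""
    return sum(step_latencies_ms)


def prob_any_step_slow(steps, percentile=0.99):
    """Chance that at least one of `steps` independent queries is slower
    than the given latency percentile (0.99 means the p99)."""
    return 1 - percentile ** steps


print(workflow_latency_ms([12, 8, 15, 20, 10]))  # 65
print(round(prob_any_step_slow(5), 4))           # 0.049
```

Five sequential steps give roughly a 4.9% chance of hitting at least one p99-slow query, so per-query tail latency matters more for agents than for single-shot search.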

Reliability and System Availability

The tolerance for downtime is perhaps the most significant cultural difference between experimental and production teams. In a pilot project, if a server goes offline for an hour, the development team simply restarts it and continues their work. Experimental AI is often hosted on single-node setups or basic cloud instances that lack sophisticated failover mechanisms. This “best-effort” approach to availability is acceptable when the only users are internal developers, but it is entirely insufficient for applications that support mission-critical business functions.

Production AI environments require “always-on” availability, a standard typically met with Multi-AZ (Availability Zone) clusters. By replicating data across three distinct zones, platforms like Qdrant keep an application running even if an entire zone fails. This redundancy enables automatic failover: the system switches to a healthy replica without manual intervention. The result is an AI service that stays online and can meet the strict uptime guarantees and service level agreements (SLAs) that modern enterprises require.
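
Managed services handle failover inside the cluster, so application code rarely does this itself. The sketch below only illustrates the routing decision behind automatic failover; the zone names are hypothetical and the `healthy` flag stands in for real health checks.

```python
from dataclasses import dataclass


@dataclass
class Replica:
    zone: str
    healthy: bool = True  # stand-in for a real health check


def pick_replica(replicas, preferred_zone):
    """Prefer a healthy replica in the caller's zone, else fail over to any
    healthy replica in another zone."""
    healthy = [r for r in replicas if r.healthy]
    if not healthy:
        raise RuntimeError("no healthy replica in any zone")
    for replica in healthy:
        if replica.zone == preferred_zone:
            return replica
    return healthy[0]


cluster = [Replica("zone-a"), Replica("zone-b"), Replica("zone-c")]
cluster[0].healthy = False                   # simulate losing zone-a
print(pick_replica(cluster, "zone-a").zone)  # zone-b
```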

Beyond simple uptime, reliability in production also covers the consistency of the data itself. Experimental setups may overlook data corruption or minor inconsistencies, but in production, data integrity is paramount. Multi-zone replication protects against hardware failure and, combined with appropriate write and read consistency settings, helps ensure that queries see consistent data across the entire cluster. This architectural maturity is what allows companies to deploy AI confidently in high-stakes environments, such as medical diagnostics or automated trading, where a system failure or data error could have catastrophic consequences.
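
Replication alone does not guarantee that every read sees the latest write; that depends on how many replicas must acknowledge writes and answer reads. The sketch below encodes the general quorum rule from distributed systems (W + R > N). It is a textbook principle, not a description of Qdrant's internal consistency model.

```python
def reads_see_latest_write(replicas, write_quorum, read_quorum):
    """Quorum rule: with N replicas, a write acknowledged by W of them and a
    read that consults R of them must overlap whenever W + R > N."""
    if not (1 <= write_quorum <= replicas and 1 <= read_quorum <= replicas):
        raise ValueError("quorums must be between 1 and the replica count")
    return write_quorum + read_quorum > replicas


print(reads_see_latest_write(3, 2, 2))  # True: majority writes and reads
print(reads_see_latest_write(3, 1, 1))  # False: a read may hit a stale replica
```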

Governance and Regulatory Compliance

During the early stages of AI experimentation, the primary goal is often to “make it work,” which frequently leads to a neglect of governance and security protocols. Developers might use sensitive data without proper masking or fail to document who is accessing specific models. While this lack of oversight speeds up the initial development process, it creates significant risks that must be addressed before the system can move to production. Experimental AI is characterized by this “move fast and break things” mentality, which is increasingly at odds with the demands of the modern regulatory landscape.

Production AI, particularly in sectors like finance and healthcare, necessitates a rigorous approach to transparency and auditability. One of the most critical features in a production-ready system is robust audit logging. By capturing every API operation, organizations can create a detailed trail of how data was accessed, who manipulated it, and when these actions occurred. This capability is essential for compliance officers who must ensure that the AI operates within legal and ethical boundaries. Systems like Qdrant Cloud have introduced these logging features specifically to satisfy the needs of enterprise security teams who require full visibility into their AI infrastructure.
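
A minimal sketch of the idea behind audit logging, written as a Python decorator: each operation records who called it, with which arguments, when, and whether it succeeded. This is not Qdrant Cloud's audit-logging feature or format; `delete_points` is a hypothetical operation, and the in-memory list stands in for an append-only log store.

```python
import functools
import json
import time

AUDIT_LOG = []  # stand-in for an append-only, tamper-evident sink


def audited(operation):
    """Decorator that records who ran an operation, with what, when, and
    whether it succeeded."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(user, *args, **kwargs):
            entry = {"ts": time.time(), "user": user, "operation": operation,
                     "args": repr(args), "status": "ok"}
            try:
                return func(user, *args, **kwargs)
            except Exception:
                entry["status"] = "error"
                raise
            finally:
                AUDIT_LOG.append(json.dumps(entry))  # logged on success or failure
        return wrapper
    return decorator


@audited("delete_points")
def delete_points(user, collection, ids):
    """Hypothetical operation; a real one would call the database client."""
    return len(ids)


delete_points("alice", "support_docs", [101, 102])
print(json.loads(AUDIT_LOG[-1])["user"])  # alice
```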

Furthermore, production systems prioritize explainable workflows and secure data handling over raw model performance. While an experimental model might be praised for its creative output, a production model is valued for its predictability and its adherence to internal governance policies. This involves implementing fine-grained access controls and ensuring that the data used for retrieval is handled with the same level of security as any other corporate asset. As the regulatory environment becomes more complex, the ability to prove that an AI system is secure and compliant becomes a non-negotiable requirement for any enterprise deployment.
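
One common form of fine-grained access control is to attach allowed roles to each document and check them at retrieval time. The sketch below post-filters results for brevity, using hypothetical documents and roles. In practice it is usually better to push the check into the query as a metadata filter (most vector databases, including Qdrant, support payload filtering) so that unauthorized documents never leave the database.

```python
from dataclasses import dataclass, field


@dataclass
class Document:
    doc_id: str
    text: str
    allowed_roles: set = field(default_factory=set)


def authorized(results, user_roles):
    """Keep only documents that share at least one role with the caller."""
    return [doc for doc in results if doc.allowed_roles & user_roles]


hits = [
    Document("q3-forecast", "...", {"finance"}),
    Document("handbook", "...", {"finance", "hr", "staff"}),
    Document("salaries", "...", {"hr"}),
]
print([d.doc_id for d in authorized(hits, {"staff"})])  # ['handbook']
```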

Challenges and Constraints in Scaling AI Solutions

Moving from a prototype to a production environment introduces technical hurdles that are rarely encountered in the lab. One of the most prominent issues is the concept of “Retrieval Breadth.” Kevin Petrie, an analyst at BARC U.S., has pointed out that relying solely on vector similarity is often insufficient for the nuanced queries found in enterprise settings. While vector search is excellent at finding conceptually similar items, it can struggle with specific keyword matches or structured data filters. To overcome this, production systems must integrate a “hybrid” approach, combining vector search with traditional keyword matching and structured SQL queries into a unified framework.
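
A sketch of the hybrid pattern, assuming the vector and keyword searches have already returned ranked lists of document IDs. A structured predicate plays the role of the SQL-style filter, and the two rankings are merged with reciprocal rank fusion (RRF), one common fusion method with a conventional smoothing constant of k = 60. All inputs are hypothetical.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists of doc ids: score(d) = sum over lists of 1 / (k + rank)."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)


def hybrid_search(vector_hits, keyword_hits, metadata, predicate):
    """Apply a structured filter to both result lists, then fuse them."""
    vector_hits = [d for d in vector_hits if predicate(metadata[d])]
    keyword_hits = [d for d in keyword_hits if predicate(metadata[d])]
    return reciprocal_rank_fusion([vector_hits, keyword_hits])


vector_hits = ["d1", "d2", "d3"]   # ranked by embedding similarity
keyword_hits = ["d3", "d4", "d1"]  # ranked by keyword match (e.g. BM25)
metadata = {"d1": {"year": 2025}, "d2": {"year": 2021},
            "d3": {"year": 2026}, "d4": {"year": 2024}}
print(hybrid_search(vector_hits, keyword_hits, metadata,
                    lambda m: m["year"] >= 2024))  # ['d3', 'd1', 'd4']
```

Here `d2` is excluded by the filter, and `d3` ranks first because both retrievers place it near the top, even though vector search alone ranked `d1` higher.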

Another significant constraint is the complexity of ecosystem integration. A specialized vector database does not exist in a vacuum; it must play well with the rest of the enterprise data stack. For many organizations, this means ensuring that Qdrant or Pinecone can easily ingest data from a Databricks lakehouse or a Snowflake warehouse without creating massive amounts of manual work for data engineers. The goal is to create a seamless pipeline where data flows from the source to the AI model with minimal friction. Without this level of integration, the AI system becomes a siloed “data island” that is difficult to maintain and even harder to keep updated with the latest business information.
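
On the ingestion side, a minimal sketch assuming rows arrive as dictionaries exported from a lakehouse or warehouse table. `fake_embed` is a hypothetical stand-in for an embedding model, and the final upsert call is left out because the client API differs by product. Batching keeps memory bounded and amortizes per-request overhead.

```python
from itertools import islice


def batched(rows, size):
    """Yield lists of at most `size` rows from any iterable (e.g. a cursor)."""
    it = iter(rows)
    while batch := list(islice(it, size)):
        yield batch


def to_points(rows, embed):
    """Turn source rows into (id, vector, payload) tuples ready for upsert."""
    return [
        (row["id"], embed(row["text"]),
         {k: v for k, v in row.items() if k not in ("id", "text")})
        for row in rows
    ]


def fake_embed(text):
    """Hypothetical embedding model: a 1-dimensional vector of text length."""
    return [float(len(text))]


rows = [{"id": i, "text": f"doc {i}", "region": "eu"} for i in range(5)]
for batch in batched(rows, 2):
    print(to_points(batch, fake_embed))  # a real pipeline would upsert here
```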

Finally, enterprises must grapple with the growing burden of technical debt associated with maintaining complex AI infrastructure. Managing GPU clusters, optimizing indexes, and ensuring high availability requires a specialized skill set that is in short supply. This has led many organizations to favor managed services that offer “operational simplicity.” By offloading the maintenance of the underlying plumbing to a specialized provider, companies can focus their resources on building the actual AI applications that drive business value. The challenge lies in finding the right balance between the extreme performance of a specialized tool and the convenience of a general-purpose platform.

Strategic Recommendations for Production Readiness

The shift from experimentation to production necessitates a fundamental reassessment of an organization’s technology stack. It is no longer enough to choose a tool based on how easy it is to set up; the decision must rest on how well that tool will perform under the pressure of a thousand concurrent users and a billion-row dataset. For companies aiming for high-performance outcomes, specialized vector databases that offer GPU-accelerated processing are a strong choice, because they help keep retrieval fast as data volumes grow, keeping the AI responsive and effective.
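
To put “a billion-row dataset” in perspective, here is the raw storage arithmetic, assuming (hypothetically) 768-dimensional float32 embeddings. The figure excludes index structures and payloads and must be multiplied by the number of replicas; 1-byte scalar quantization, a general compression technique, is shown for comparison.

```python
def raw_vector_bytes(num_vectors, dims, bytes_per_dim=4):
    """Storage for the raw vectors alone (float32 = 4 bytes per dimension).
    Index structures, payloads, and replicas come on top of this."""
    return num_vectors * dims * bytes_per_dim


full = raw_vector_bytes(1_000_000_000, 768)
int8 = raw_vector_bytes(1_000_000_000, 768, bytes_per_dim=1)
print(full / 1e12, "TB")  # 3.072 TB
print(int8 / 1e9, "GB")   # 768.0 GB
```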

Reliability must be treated as a first-class citizen during the planning phase. Rather than waiting for a system failure to happen, enterprises should prioritize platforms that support Multi-AZ clusters and provide high-uptime guarantees from the start. This proactive approach to architecture reduces the risk of costly downtime and ensures that the AI application can support mission-critical workflows without interruption. Additionally, for those operating in regulated industries, selecting a solution with built-in audit logging and transparent data handling is essential for streamlining the compliance process and satisfying internal security requirements.

The transition from building AI to running AI demands a newfound respect for the complexities of real-world deployment. The organizations that succeed are those that look beyond the initial excitement of generative models and invest heavily in the unglamorous but essential aspects of infrastructure. They recognize that a CPU-based prototype is a good starting point, but that a GPU-accelerated, multi-zone production system is what actually delivers business results. By addressing the practical hurdles of speed, reliability, and governance, these enterprises bridge the production gap and turn their AI experiments into durable, high-value assets.