Modern software developers work in a landscape where the speed of large language models (LLMs) masks a growing deficit in system predictability. Generating a complex function from a single natural-language prompt felt like magic only a few years ago, but that novelty has given way to a sobering realization: code that is produced quickly is not necessarily code that is correct. LLMs currently operate under a single-pass fallacy, attempting to move from ambiguous human intent to rigid machine code in one large, probabilistic leap. This approach lacks the guardrails that professional engineering requires, leaving teams with code that looks right but fails in the subtle, high-stakes edge cases that determine production stability.

The industry now stands at a crossroads where “mostly correct” has become a liability rather than an asset. For a startup building a prototype, a missing or incorrect null check is a minor annoyance; for a financial institution or a healthcare provider, the same defect can be a catastrophic failure. Moving beyond this stochastic crisis requires a fundamental shift in how developers use artificial intelligence. Instead of treating the AI as the standalone author of final code, attention is shifting toward architectural frameworks that reintroduce engineering rigor. By separating the AI’s creative reasoning from the final synthesis of syntax, the industry is rediscovering a path toward functional reliability.

The Stochastic Crisis in Software Development

A paradox defines the current state of software engineering: developers are writing code faster than ever, yet the resulting systems are becoming harder to predict. This tension stems from a fundamental mismatch between the way humans reason about programs and the way LLMs generate text. In a traditional workflow, each line of code was, at least in principle, a deliberate choice made by a developer who understood the underlying logic. Today the process is increasingly probabilistic: a model predicts the next token from statistical patterns rather than from logical necessity. This shift has produced the “single-pass” fallacy, the expectation that a model will get logic, syntax, and security right in a single emission.

Relying on raw AI output for enterprise-grade systems is a gamble that few organizations can afford to take over the long term. When an AI generates a snippet of code, it does not “know” whether the code works; it only produces code that resembles code it has seen before. This lack of understanding leads to structural errors that are easy to miss in a cursory review. In enterprise applications, the cost of a “hallucination”, such as an invented library method or an omitted security check, can far exceed the time saved by the initial generation. True engineering rigor requires a system that prioritizes stability over raw speed, so that generated output is not merely plausible but verifiably correct.

Why Deterministic Engineering Is Breaking Under AI

The transition from deterministic logic to probabilistic outcomes has fundamentally altered the relationship between developers and their tools. Historically, software development was a series of if-this-then-that propositions in which a system’s behavior could be fully explained by its source code. AI-generated snippets introduce a “Hallucination Barrier” that adding parameters and training data has so far failed to break. However sophisticated a model becomes, it remains a statistical engine, so it always retains a non-zero probability of generating nonsense. That inherent uncertainty is incompatible with the strict requirements of production code.
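To see why a small per-step error rate still matters at scale, assume, purely for illustration, that each generated unit (say, a function) independently contains a defect with some probability $p$. The probability that a codebase of $n$ such units contains at least one defect is then:

$$
P(\text{at least one defect}) = 1 - (1 - p)^{n}
$$

With the hypothetical values $p = 0.001$ and $n = 1000$, this gives $1 - 0.999^{1000} \approx 0.63$. For any fixed $p > 0$ the probability approaches 1 as $n$ grows: a better model lowers $p$ but does not make it zero. Real defects are not independent, so this is a simplification rather than a prediction.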

The real-world risks of raw AI output are becoming increasingly visible as subtle security vulnerabilities and maintenance burdens. An LLM might generate a working user interface but skip proper input sanitization, or produce a complex microservice that lacks necessary error handling. These gaps pose a significant threat to organizational security, and missing null checks become routine in codebases bloated by unverified AI suggestions. The result is a widening gap between the AI’s often impressive creative output and the rigid performance requirements of modern hardware and cloud infrastructure.
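For a hypothetical, concrete instance of the sanitization gap, consider a lookup helper of the kind a model might plausibly emit. The sketch below uses Python’s built-in `sqlite3` module with an in-memory database; the table, the helper names, and the payload are illustrative assumptions. Both functions look reasonable at a glance, but only the parameterized one treats user input as data.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    # In-memory database with two illustrative users.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, email TEXT)")
    conn.executemany("INSERT INTO users VALUES (?, ?)",
                     [("alice", "alice@example.com"), ("bob", "bob@example.com")])
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Plausible-looking but unsanitized: user input is spliced into the SQL text.
    return conn.execute(f"SELECT email FROM users WHERE name = '{name}'").fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Parameterized query: the driver passes `name` as a value, never as SQL.
    return conn.execute("SELECT email FROM users WHERE name = ?", (name,)).fetchall()


conn = make_db()
payload = "x' OR '1'='1"
print(len(find_user_unsafe(conn, payload)))  # 2: every row leaks
print(len(find_user_safe(conn, payload)))    # 0: no user has that literal name
```

Parameterized queries are a well-known, mechanical pattern, which is exactly the kind of rule that is easy to forget in a single probabilistic pass and easy to enforce in a deterministic one.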

Lessons From the 1970s: The Multi-Pass Evolution

History offers a compelling answer to this modern dilemma in the compiler design of the 1970s. Many early compilers were single-pass systems that read source code and emitted machine code in the same sweep, much like current AI tools. Because code was emitted as soon as each construct was read, these compilers could not revisit or globally optimize what they had already processed, and they struggled with the growing complexity of high-level languages. This fragility pushed computer scientists to rethink the nature of translation and led to the widespread adoption of the multi-pass compiler.

The pivot to multi-pass architecture made central the concept of the Intermediate Representation (IR), a bridge between human-readable source and machine-executable binary. By breaking compilation into distinct phases (parsing, optimization, and code generation), engineers created a buffer that allowed far greater complexity and reliability. This separation of concerns became the standard design for compilers of foundational languages such as C++ and Java. It lets the compiler validate the structure and logic of a program in an abstract form before committing to a specific machine implementation. Today, this 50-year-old lesson provides a blueprint for fixing the unpredictability of AI-generated software.
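The three phases can be illustrated with a toy compiler for integer arithmetic. This is a minimal sketch, not a model of any historical compiler: the IR classes (`Num`, `Var`, `BinOp`) and the stack-machine instructions (`PUSH`, `LOAD`, `ADD`, `SUB`, `MUL`) are hypothetical, and Python’s standard `ast` module stands in for a hand-written parser. The optimizer works entirely on the IR and never sees the target instructions.

```python
import ast
from dataclasses import dataclass
from typing import Union


# Intermediate representation (IR): a tiny, target-independent expression tree.
@dataclass(frozen=True)
class Num:
    value: int


@dataclass(frozen=True)
class Var:
    name: str


@dataclass(frozen=True)
class BinOp:
    op: str  # "+", "-" or "*"
    left: "Expr"
    right: "Expr"


Expr = Union[Num, Var, BinOp]
_OPS = {ast.Add: "+", ast.Sub: "-", ast.Mult: "*"}


def parse(source: str) -> Expr:
    """Pass 1 (front end): source text -> IR; anything outside the subset is rejected."""
    def lower(node: ast.AST) -> Expr:
        if isinstance(node, ast.Constant) and type(node.value) is int:
            return Num(node.value)
        if isinstance(node, ast.Name):
            return Var(node.id)
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return BinOp(_OPS[type(node.op)], lower(node.left), lower(node.right))
        raise ValueError(f"unsupported construct: {ast.dump(node)}")
    return lower(ast.parse(source, mode="eval").body)


def fold(expr: Expr) -> Expr:
    """Pass 2 (optimizer): constant folding on the IR, with no knowledge of the target."""
    if isinstance(expr, BinOp):
        left, right = fold(expr.left), fold(expr.right)
        if isinstance(left, Num) and isinstance(right, Num):
            a, b = left.value, right.value
            return Num({"+": a + b, "-": a - b, "*": a * b}[expr.op])
        return BinOp(expr.op, left, right)
    return expr


def generate(expr: Expr) -> list[str]:
    """Pass 3 (back end): IR -> instructions for a hypothetical stack machine."""
    if isinstance(expr, Num):
        return [f"PUSH {expr.value}"]
    if isinstance(expr, Var):
        return [f"LOAD {expr.name}"]
    opcode = {"+": "ADD", "-": "SUB", "*": "MUL"}[expr.op]
    return generate(expr.left) + generate(expr.right) + [opcode]


def compile_expr(source: str) -> list[str]:
    return generate(fold(parse(source)))


print(compile_expr("x * (2 + 3)"))  # ['LOAD x', 'PUSH 5', 'MUL']
```

Retargeting this toy to a different machine would mean replacing only `generate`; parsing and folding stay untouched.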

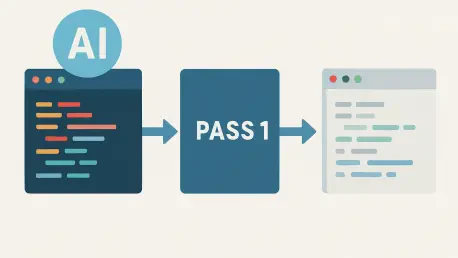

A New Framework: The Two-Pass AI Architecture

The emergence of a Two-Pass AI Architecture marks a significant step toward stabilizing automated development. In the first pass, the LLM acts as a high-level reasoning engine rather than a raw code writer. Its job is to decompose complex business requirements into an Intermediate Representation: a structured meta-language that captures the intent of the software without getting bogged down in framework-specific syntax. Limiting the model’s “stochastic surface area” in this way sharply reduces its opportunities to hallucinate malformed tags or incorrect API calls, and because the IR has a fixed structure, any that do slip through can be detected before code is generated. The AI focuses on the “what” and the “why,” leaving the “how” to a more disciplined process.
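As an illustration, the first pass for a simple sign-up form might emit a JSON IR, which a validator checks before anything else happens. This is a minimal sketch under stated assumptions: the IR shape (a `screen` name plus a list of `components`), the closed vocabulary of kinds (`text_input`, `email_input`, `button`), and the `validate_ir` helper are all hypothetical, not a published format. The point is that the model may only choose from a fixed vocabulary, so an invented component kind is rejected rather than turned into code.

```python
import json
import re

# Hypothetical IR vocabulary: the only component kinds the second pass knows how to emit.
ALLOWED_KINDS = {"text_input", "email_input", "button"}
ID_PATTERN = re.compile(r"[a-z][a-z0-9_]*")  # conservative identifier rule


def validate_ir(raw: str) -> dict:
    """Parse first-pass output; return the IR or raise ValueError listing every problem."""
    try:
        ir = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"IR is not valid JSON: {exc}") from exc
    if not isinstance(ir, dict) or not isinstance(ir.get("screen"), str):
        raise ValueError("IR must be an object with a string 'screen' field")
    components = ir.get("components")
    if not isinstance(components, list) or not components:
        raise ValueError("IR must contain a non-empty 'components' list")

    errors = []
    seen_ids = set()
    for i, comp in enumerate(components):
        if not isinstance(comp, dict):
            errors.append(f"component {i}: not an object")
            continue
        kind, cid, label = comp.get("kind"), comp.get("id"), comp.get("label")
        if not isinstance(kind, str) or kind not in ALLOWED_KINDS:
            errors.append(f"component {i}: unknown kind {kind!r}")  # e.g. a hallucinated widget
        if not isinstance(cid, str) or not ID_PATTERN.fullmatch(cid):
            errors.append(f"component {i}: invalid id {cid!r}")
        elif cid in seen_ids:
            errors.append(f"component {i}: duplicate id {cid!r}")
        else:
            seen_ids.add(cid)
        if not isinstance(label, str) or not label.strip():
            errors.append(f"component {i}: missing label")
    if errors:
        raise ValueError("; ".join(errors))
    return ir


# Example first-pass output for a sign-up form, and a variant with an invented kind.
GOOD = ('{"screen": "sign_up", "components": ['
        '{"kind": "email_input", "id": "email", "label": "Email", "required": true},'
        '{"kind": "button", "id": "submit", "label": "Create account"}]}')
BAD = '{"screen": "sign_up", "components": [{"kind": "date_picker_pro", "id": "dob", "label": "Birthday"}]}'

print(validate_ir(GOOD)["screen"])  # sign_up
try:
    validate_ir(BAD)
except ValueError as exc:
    print(exc)  # component 0: unknown kind 'date_picker_pro'
```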

The second pass returns the process to deterministic engineering. A specialized generator, governed by fixed rules rather than statistical probabilities, takes the validated IR and translates it into production-ready code for a target such as React, Angular, or Swift. This pass does not rely on AI; it uses conventional templates and logic, so the output is fully auditable and reproducible: the same IR always yields the same code. Because the generator applies standardized security patterns and organizational best practices every time, the resulting code is consistent in quality. The final deployment is therefore not a guess but the mechanical execution of a verified design specification.
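A deterministic second pass can be as plain as a table of templates. The sketch below is an assumption-laden illustration, not a production generator: it targets React (JSX) only, the template strings and the `generate_react` and `fingerprint` helpers are hypothetical, and it assumes the IR (a screen name plus components with `kind`, `id`, `label`, and optional `required`) has already been validated. Given the same IR, it produces byte-identical output, which is what makes the result auditable.

```python
import hashlib
import json

# Hypothetical templates, one per IR component kind. Labels are always emitted as
# JSON string literals inside a JSX expression ({"..."}), so React renders them as
# text and no label can inject markup.
TEMPLATES = {
    "text_input": ('<label htmlFor="{id}">{{{label}}}</label>\n'
                   '      <input id="{id}" name="{id}" type="text"{required} />'),
    "email_input": ('<label htmlFor="{id}">{{{label}}}</label>\n'
                    '      <input id="{id}" name="{id}" type="email"{required} />'),
    "button": '<button type="submit" id="{id}">{{{label}}}</button>',
}


def generate_react(ir: dict) -> str:
    """Deterministically translate a (previously validated) IR into a React component."""
    name = "".join(part.capitalize() for part in ir["screen"].split("_")) + "Screen"
    parts = []
    for comp in ir["components"]:  # IR order is preserved: no randomness, no reordering
        parts.append(TEMPLATES[comp["kind"]].format(
            id=comp["id"],
            label=json.dumps(comp["label"]),
            required=" required" if comp.get("required") else "",
        ))
    body = "\n      ".join(parts)
    return (
        f"export function {name}() {{\n"
        "  return (\n"
        "    <form>\n"
        f"      {body}\n"
        "    </form>\n"
        "  );\n"
        "}\n"
    )


def fingerprint(code: str) -> str:
    """Content hash an auditor can record to confirm the same IR yields the same code."""
    return hashlib.sha256(code.encode("utf-8")).hexdigest()


ir = {"screen": "sign_up", "components": [
    {"kind": "email_input", "id": "email", "label": "Email", "required": True},
    {"kind": "button", "id": "submit", "label": "Create account"},
]}
code = generate_react(ir)
print(code)
assert fingerprint(generate_react(ir)) == fingerprint(code)  # reproducible output
```

Because every label passes through the same escaping rule, a security fix made once in a template applies to every generated screen.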

Bridging Creative Reasoning and Machine Precision

The industry is beginning to shift its focus away from building ever “smarter” models toward building smarter architectures around those models. A promising way to use AI in this setting is through a “Gatekeeper” effect, in which the system catches hallucinated tokens or structural flaws before they ever reach the final codebase. By constraining what the AI is allowed to produce, organizations can harness the creative power of these models without sacrificing the integrity of their software. This structural approach adds a protective layer that keeps the logic sound while the AI handles the cognitive load of interpreting requirements.
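The gatekeeper idea can be sketched as a loop that sits between the model and the rest of the pipeline. This is a minimal illustration, assuming a hypothetical `ask_model` callable (any function that takes a prompt and returns text; no real LLM API is used here) and a hypothetical closed vocabulary of component kinds. Only output that passes the check ever leaves the function; rejected output is fed back with the reason, and after a fixed number of attempts the gatekeeper refuses rather than guessing.

```python
import json
from typing import Callable, Optional

# Hypothetical closed vocabulary that any accepted IR must stay within.
ALLOWED_KINDS = {"text_input", "email_input", "button"}


def check_ir(raw: str) -> Optional[str]:
    """Return None if `raw` is acceptable IR, otherwise a short rejection reason."""
    try:
        ir = json.loads(raw)
    except json.JSONDecodeError:
        return "output is not valid JSON"
    comps = ir.get("components") if isinstance(ir, dict) else None
    if not isinstance(comps, list) or not comps:
        return "missing 'components' list"
    for comp in comps:
        kind = comp.get("kind") if isinstance(comp, dict) else None
        if not isinstance(kind, str) or kind not in ALLOWED_KINDS:
            return f"unknown component kind {kind!r}"
    return None


def gatekeeper(ask_model: Callable[[str], str], prompt: str, max_attempts: int = 3) -> dict:
    """Return validated IR; retry with feedback on rejection, raise if never valid."""
    feedback = ""
    for _ in range(max_attempts):
        raw = ask_model(prompt + feedback)
        reason = check_ir(raw)
        if reason is None:
            return json.loads(raw)
        # Tell the next attempt exactly why the previous output was refused.
        feedback = f"\nPrevious output rejected: {reason}. Use only {sorted(ALLOWED_KINDS)}."
    raise RuntimeError(f"no valid IR after {max_attempts} attempts")


# Stub "model" for demonstration: first hallucinates a widget, then corrects itself.
responses = iter([
    '{"components": [{"kind": "fancy_slider", "id": "age"}]}',
    '{"components": [{"kind": "text_input", "id": "age"}]}',
])
ir = gatekeeper(lambda p: next(responses), "Build an age form")
print(ir["components"][0]["kind"])  # text_input
```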

This shift embodies the philosophy of “Augmented Engineering,” in which humans remain in control of the underlying logic while machines manage the tedious details of syntax and framework integration. Case studies suggest that when structural constraints are applied to AI workflows, the resulting systems tend to be more secure and to perform better. The AI acts as a collaborator that drafts the blueprints, while the deterministic pass acts as the master builder that follows those blueprints to the letter. This division of labor allows a degree of scale and complexity that neither a human developer nor a standalone AI could achieve with the same reliability.

Implementing the Two-Pass Strategy in Modern Workflows

To implement a two-pass strategy, an organization must first define a robust meta-language to serve as its Intermediate Representation. This IR must be expressive enough to capture the nuances of the design specifications yet rigid enough for a deterministic generator to validate easily. Building this bridge requires a deep understanding of the target platforms, so that the transition from stochastic reasoning to machine-ready code is seamless. Developers should concentrate on refining these design specifications, treating the meta-language as the primary source of truth for the application’s structure.
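Treating the IR as the single source of truth means one specification can feed several platforms. The sketch below is hypothetical throughout: the `Backend` type, the React and SwiftUI template strings, and the `submit()` action and `$name` binding in the emitted Swift are illustrative assumptions, and the emitted snippets are fragments rather than complete files. What it shows is the shape of the idea: platform knowledge (templates and string-quoting rules) lives in per-target tables, while the IR stays the same.

```python
import json
from dataclasses import dataclass
from typing import Callable


def js_string(s: str) -> str:
    # JSON string literals are also valid JavaScript string literals.
    return json.dumps(s)


def swift_string(s: str) -> str:
    # Minimal Swift quoting for this sketch: escape backslashes (which also
    # neutralizes "\(" interpolation) and double quotes; reject control characters.
    if any(ord(c) < 0x20 for c in s):
        raise ValueError("control characters are not supported in this sketch")
    return '"' + s.replace("\\", "\\\\").replace('"', '\\"') + '"'


@dataclass(frozen=True)
class Backend:
    quote: Callable[[str], str]  # target-specific string-literal rules
    templates: dict[str, str]    # one template per IR component kind


# Hypothetical back ends. Adding a platform means adding an entry here;
# the IR, and therefore the design specification, does not change.
BACKENDS = {
    "react": Backend(js_string, {
        "text_input": '<input id="{id}" type="text" placeholder={label} />',
        "button": '<button id="{id}">{{{label}}}</button>',
    }),
    "swiftui": Backend(swift_string, {
        "text_input": "TextField({label}, text: ${id})",
        "button": "Button({label}) {{ submit() }}",
    }),
}


def render(ir: dict, target: str) -> list[str]:
    backend = BACKENDS[target]  # an unknown target fails loudly with KeyError
    return [backend.templates[c["kind"]].format(id=c["id"], label=backend.quote(c["label"]))
            for c in ir["components"]]


profile = {"screen": "profile", "components": [
    {"kind": "text_input", "id": "name", "label": "Name"},
    {"kind": "button", "id": "save", "label": "Save"},
]}
for target in BACKENDS:
    print(target, render(profile, target))
```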

Scaling this architecture makes AI-generated code viable for high-stakes enterprise environments where failure is not an option. By validating and testing at the IR level, teams can preserve stability even as the AI models underneath them evolve. This approach lets engineering leaders reclaim the predictability lost during the initial AI gold rush. The path forward is a commitment to the discipline of the past, so that the software of the future rests on a foundation of verified intent. By separating the act of thinking from the act of writing, engineers can finally trust machines to help build the world.
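Testing at the IR level can be as simple as asserting business rules against a reviewed IR fixture. In the sketch below, the rules in the hypothetical `lint_ir` helper (unique ids, exactly one submit button, a visible label on every input) are illustrative assumptions, and the tests are written as plain pytest-style functions with bare asserts. Because they inspect the IR rather than a model’s raw text, they behave the same regardless of which model or model version produced the IR.

```python
# Hypothetical IR-level rules for a form screen. They run on the IR itself, so they
# pass or fail the same way no matter which model (or version) produced it.
def lint_ir(ir: dict) -> list[str]:
    problems = []
    comps = ir["components"]
    ids = [c["id"] for c in comps]
    if len(ids) != len(set(ids)):
        problems.append("component ids must be unique")
    buttons = [c for c in comps if c["kind"] == "button"]
    if len(buttons) != 1:
        problems.append(f"expected exactly one submit button, found {len(buttons)}")
    for c in comps:
        if c["kind"].endswith("_input") and not (c.get("label") or "").strip():
            problems.append(f"input {c['id']!r} needs a visible label")
    return problems


# A reviewed IR fixture: this, rather than the model's raw text, is what the tests pin down.
SIGNUP_IR = {"screen": "sign_up", "components": [
    {"kind": "email_input", "id": "email", "label": "Email"},
    {"kind": "button", "id": "submit", "label": "Create account"},
]}


# pytest-style tests: plain functions with bare asserts, discovered by `pytest`.
def test_reviewed_ir_passes_lint():
    assert lint_ir(SIGNUP_IR) == []


def test_missing_button_is_caught():
    broken = {**SIGNUP_IR, "components": SIGNUP_IR["components"][:1]}
    assert "expected exactly one submit button, found 0" in lint_ir(broken)


def test_unlabeled_input_is_caught():
    broken = {**SIGNUP_IR, "components": [
        {"kind": "text_input", "id": "name", "label": "   "},
        SIGNUP_IR["components"][1],
    ]}
    assert lint_ir(broken) == ["input 'name' needs a visible label"]
```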