Open-source security has shifted sharply as artificial intelligence has moved from generating hallucinated noise to producing sophisticated, verifiable technical findings. For several years, project maintainers were inundated by what many called “AI slop”: low-quality, illogical bug reports with no grounded reasoning or actionable data. These automated submissions often fabricated vulnerabilities in non-existent code paths, forcing human developers to spend hundreds of collective hours debunking false positives and explaining basic logic to bot-driven accounts. The friction reached a breaking point when high-profile initiatives, including the cURL project, suspended their bug bounty programs because the volume of junk reports overwhelmed their ability to process genuine security threats. That era has now largely given way to one in which AI-driven reports rival the precision of elite human researchers.

The Abrupt Leap: A Synchronized Evolution in Logic

One of the most striking aspects of this change is how synchronized it was: the improvement appeared almost simultaneously across disparate projects throughout the open-source ecosystem. Maintainers of the Linux kernel and other critical infrastructure components reported a sharp, unexplained rise in the quality of incoming reports within a very narrow timeframe, suggesting a broad shift in how the underlying models process code. The change was not a gradual incline but a sudden leap from incoherent gibberish to structured, evidence-based security analyses that include reproducible test cases. The exact catalyst is still debated. Some experts point to a large, unannounced update to the underlying architecture of major large language models. Others argue that security-focused teams have refined the training data and prompting strategies used for vulnerability discovery, closing the gap between general reasoning and specialized software engineering proficiency.

Regardless of the specific trigger, the consensus among developers is that the baseline for automated code analysis has moved permanently, rendering much of the old skepticism toward AI-generated reports obsolete. The tools now appear to follow complex code paths and reason about program logic rather than relying on simple pattern matching. Instead of flagging potential issues with vague descriptions, they provide clear, step-by-step reasoning that lets maintainers verify a vulnerability in a fraction of the time it previously took. The shift marks the end of the era dominated by hallucinated bugs and establishes artificial intelligence as a legitimate partner in securing global digital infrastructure. It has also forced maintainers to reevaluate how they handle automated submissions: the reports are now too valuable to ignore, which requires a fundamental change in standard code review procedures across major platforms.

Proactive Remediation: Moving from Detection to Automated Resolution

The utility of artificial intelligence has rapidly expanded beyond identifying flaws into the more complex work of proactive remediation and patch generation. Within the Linux kernel development community, for instance, these tools are proving efficient at fixing long-standing logic errors, particularly those hidden in intricate error-handling routines. Recent experiments conducted by lead maintainers demonstrated that when prompted to analyze specific legacy subsystems, an AI could not only identify dozens of legitimate security risks but also generate functional patches for them in the same pass. A significant majority of these proposed fixes were either directly usable in the production branch or required only minor adjustments to syntax and documentation from human supervisors to meet the project’s strict standards. This capability turns the AI from a reporting tool into an active participant in the development lifecycle, significantly reducing the manual labor traditionally required for initial code correction and hardening.

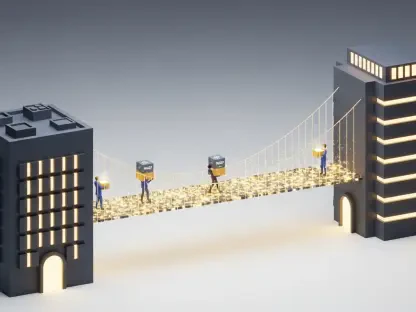

To manage the resulting influx of high-quality data, the open-source community has begun adopting a strategy known as “arming AI in reverse,” where maintainers deploy their own automated systems to filter and verify incoming patches. This defensive layer is essential for maintaining project velocity, as it allows for the near-instant screening of submissions before they ever reach a human reviewer’s desk. Notable implementations include Google’s Sashiko tool and specialized review pipelines developed at Meta, which provide developers with feedback on their C-based codebases in minutes rather than days. By turning artificial intelligence into a vital defensive filter, projects can now handle a much higher throughput of contributions while maintaining rigorous safety standards. This setup creates a dynamic where the AI serves as both the investigator and the first-line auditor, allowing human maintainers to focus their limited cognitive energy on high-level architectural decisions rather than the repetitive minutiae of initial code validation and syntax checking.
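The screening layer described above can be pictured with a minimal Python sketch. It does not reflect how Sashiko or Meta’s pipelines actually work; the `Submission` fields, the `Verdict` categories, and the routing rules are all hypothetical, and the boolean checks are assumed to have been computed beforehand by a project’s own automation.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Verdict(Enum):
    REJECT = "reject"              # bounced automatically, no human time spent
    NEEDS_INFO = "needs_info"      # returned to the submitter for more evidence
    HUMAN_REVIEW = "human_review"  # queued for a maintainer's final check


@dataclass
class Submission:
    """Hypothetical record of one incoming report with an attached patch."""
    title: str
    has_reproducer: bool  # a test case demonstrating the bug is attached
    patch_applies: bool   # the patch applies cleanly to the current tree
    build_passes: bool    # the tree still builds with the patch applied
    tests_pass: bool      # the existing test suite still passes


def triage(sub: Submission) -> Verdict:
    """Route a submission using illustrative rules (assumed, not a standard)."""
    # A patch that does not apply or breaks the build is not worth human time.
    if not sub.patch_applies or not sub.build_passes:
        return Verdict.REJECT
    # Missing evidence or failing tests: ask the submitter to follow up.
    if not sub.has_reproducer or not sub.tests_pass:
        return Verdict.NEEDS_INFO
    # Everything mechanical checks out; a human makes the final call.
    return Verdict.HUMAN_REVIEW


def review_queue(subs: List[Submission]) -> List[Submission]:
    """Keep only the submissions that should reach a human reviewer."""
    return [s for s in subs if triage(s) is Verdict.HUMAN_REVIEW]
```

In this sketch the automated layer never accepts a patch on its own. It only decides what is worth a maintainer’s attention, which matches the article’s point that a human still performs the final check.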

The Management Challenge: Balancing Throughput and Human Fatigue

While the surge in AI-generated contribution quality is a positive development for global software security, it has introduced a logistical challenge frequently referred to as the “input flood.” Because the barrier to generating professional-sounding bug reports and functional patches is now much lower, the total volume of incoming submissions has risen sharply. Even though the reports are far more accurate than in previous years, every submission still requires a final “sanity check” by a human maintainer to ensure it does not introduce subtle regressions or architectural inconsistencies. This creates a paradox in which higher-quality AI output makes the human’s job more taxing: distinguishing a “nearly correct” AI patch from a “perfect” human one requires more focus than debunking the obvious nonsense of the past. The mental load of processing hundreds of high-stakes, technically dense submissions per week is leading to a noticeable rise in maintainer fatigue across the community.

This operational burden is felt most acutely by small and medium-sized projects that lack the organizational infrastructure and dedicated security teams found in larger ecosystems like the Linux kernel. To address this disparity, organizations such as the Open Source Security Foundation (OpenSSF) are prioritizing standardized tools and cloud-based review infrastructure to help smaller teams manage the automated surge. The strategy is to build a sustainable “AI vs. AI” model in which a project’s success is determined not by the number of human hours available but by how effectively those humans use automated systems to keep pace with AI-driven discovery. The aim is to spread the benefits of the intelligence leap across the entire software supply chain rather than concentrating them in well-funded projects. Moving forward, the industry must refine these collaborative workflows so that humans remain a focused oversight authority rather than a bottleneck in the security pipeline.

The transition from digital slop to actionable intelligence has reshaped open-source maintenance and security practice. Projects that have integrated automated review layers report a marked increase in their ability to resolve long-standing vulnerabilities that had gone unnoticed for years. The move toward an “AI vs. AI” defensive model provides a necessary buffer against the volume of high-quality submissions, helping smaller teams avoid being buried by the pace of discovery. Developers are learning that the key to long-term sustainability is not to resist the influx of automated reports but to build vetting pipelines that put architectural integrity first. The result is a new standard for collaborative development in which machine efficiency and human judgment work together. The organizations best positioned to benefit are those that invest early in infrastructure that lets their maintainers act as final auditors rather than manual laborers. Ultimately, the shift suggests that the real value of artificial intelligence lies in amplifying human expertise rather than replacing it.