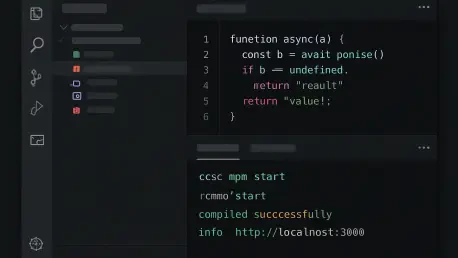

The rapid acceleration of artificial intelligence has pushed even long-stable software tools to trade their traditional monthly release schedules for a weekly iteration cycle that prioritizes immediate adaptation. Visual Studio Code 1.113 represents the peak of this transition, moving beyond its legacy as a lightweight text editor to become an orchestration layer for autonomous agents. By integrating the Model Context Protocol (MCP) and tightening its Copilot integration, Microsoft is attempting to solve the fragmentation that often plagues AI-assisted engineering.

The Shift to Weekly Iteration and Generative AI Integration

Transitioning to a weekly cadence allows the development team to ship features as fast as AI research evolves. This agility is not merely about bug fixes; it is a strategic move to ensure that the editor remains the primary interface for the Copilot ecosystem. By focusing on the “active collaborator” model, the IDE now treats AI as a first-class citizen rather than a bolt-on extension, which distinguishes it from competitors that still rely on more static integration methods.

This version centers on transforming the developer experience from manual input to guided synthesis. By prioritizing the Model Context Protocol, the platform creates a standardized way for AI models to access local data, tools, and services. This move toward an open standard for context sharing is notable, as it counters the “silo effect” in which AI tools are blind to the specific environmental nuances of a developer’s workspace.
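To make the idea of standardized context access more concrete, here is a minimal sketch of the kind of message an MCP client sends when it asks a server to run a tool. MCP messages follow JSON-RPC 2.0, and tool invocations use the `tools/call` method; the helper name `build_tool_call`, the tool name `read_workspace_file`, and its `path` argument are assumptions made up for illustration, not part of VS Code or any particular server.

```python
import json
from itertools import count

# JSON-RPC request ids should be unique within a session; a simple counter is enough here.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request asking an MCP server to run one of its tools."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


if __name__ == "__main__":
    # "read_workspace_file" is a hypothetical tool name used only for this example.
    request = build_tool_call("read_workspace_file", {"path": "src/app.css"})
    print(json.dumps(request, indent=2))
```

Because every server accepts the same request shape, a client needs only the tool name and its arguments, which is what lets one editor talk to many independent context providers.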

Enhanced Customization and Interoperability Features

The Preview Chat Customizations Editor

The introduction of the Chat Customizations Editor provides a centralized hub for managing the growing complexity of AI interactions. This interface allows developers to organize prompt files and custom instructions through a streamlined, tabbed system, effectively giving teams a way to “program” their AI’s behavior. The inclusion of an embedded editor with syntax highlighting ensures that these instructions are treated with the same level of rigor as actual source code.

This granular control matters because it addresses the generic nature of standard LLM responses. Instead of receiving broad suggestions, engineers can fine-tune the AI to respect internal architectural patterns or specific library constraints. This shift toward “hyper-local” AI logic makes the tool far more valuable in enterprise settings where compliance and specific coding standards are non-negotiable.

Expansion of the Model Context Protocol (MCP)

Version 1.113 significantly broadens the reach of MCP servers, enabling them to communicate with the Copilot CLI and external agents like Claude. By moving away from fragile file-parsing techniques toward an official API for session management, the update ensures that data exchange is both secure and persistent. This technical improvement means that an AI agent can maintain a coherent “memory” of a project even as a developer moves between the terminal and the editor.

Advancements in Autonomous Workflows and Reasoning Control

Modern development tasks often require multi-step logic that exceeds the capability of a single prompt. This release introduces the ability for subagents to invoke other subagents, facilitating complex automated workflows that can handle everything from refactoring to test generation. To prevent these processes from spiraling out of control, Microsoft implemented logic guardrails that manage recursive calls, ensuring that the automation remains productive rather than becoming a resource drain.
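The release notes do not spell out how these guardrails work, so the following is only a sketch of one common approach: tracking nesting depth and refusing to delegate past a fixed limit. The `Subagent` class, the `DepthLimitExceeded` error, and the limit of 3 are all hypothetical and are not taken from VS Code.

```python
from dataclasses import dataclass, field

MAX_DEPTH = 3  # hypothetical limit; the editor's real threshold is not documented here


class DepthLimitExceeded(RuntimeError):
    """Raised when nested subagent calls go deeper than the allowed limit."""


@dataclass
class Subagent:
    name: str
    children: list["Subagent"] = field(default_factory=list)

    def run(self, task: str, depth: int = 0, max_depth: int = MAX_DEPTH) -> list[str]:
        """Handle a task, then delegate to child subagents one level deeper."""
        if depth > max_depth:
            raise DepthLimitExceeded(
                f"{self.name} was invoked at depth {depth}, limit is {max_depth}"
            )
        log = [f"{'  ' * depth}{self.name}: {task}"]
        for child in self.children:
            log.extend(child.run(task, depth + 1, max_depth))
        return log


if __name__ == "__main__":
    tests = Subagent("test-writer")
    refactor = Subagent("refactorer", [tests])
    print("\n".join(refactor.run("rename UserService")))

    # A subagent that delegates to itself would recurse forever without the guard.
    looping = Subagent("looper")
    looping.children.append(looping)
    try:
        looping.run("never finishes")
    except DepthLimitExceeded as err:
        print(f"stopped: {err}")
```

A depth counter is the simplest possible guard; a production system would likely also cap total calls or elapsed time, since a wide but shallow call tree can be just as expensive as a deep one.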

Furthermore, the new “Thinking Effort” submenu allows users to manually adjust the processing depth of high-reasoning models like Claude Sonnet or GPT variants. This feature is a direct response to the varying needs of a workday; a developer might want a “shallow” quick fix for a syntax error but require “deep” reasoning for a complex architectural migration. Providing this manual throttle gives the human user final say over the balance between speed and computational cost.
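The speed-versus-cost trade-off can be pictured as a mapping from an effort level to a reasoning budget. The sketch below is purely illustrative: the `ThinkingEffort` levels, the token budgets, and the `suggest_effort` heuristic are assumptions and do not reflect the actual settings or values exposed by the editor or by any model provider.

```python
from enum import Enum


class ThinkingEffort(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical reasoning-token budgets; real limits depend on the model and provider.
REASONING_BUDGET = {
    ThinkingEffort.LOW: 1_024,
    ThinkingEffort.MEDIUM: 8_192,
    ThinkingEffort.HIGH: 32_768,
}


def suggest_effort(files_touched: int) -> ThinkingEffort:
    """Suggest an effort level from the rough size of a change (illustrative heuristic)."""
    if files_touched <= 1:
        return ThinkingEffort.LOW
    if files_touched <= 10:
        return ThinkingEffort.MEDIUM
    return ThinkingEffort.HIGH


if __name__ == "__main__":
    for files in (1, 5, 40):
        effort = suggest_effort(files)
        print(f"{files} file(s): {effort.value} effort, budget {REASONING_BUDGET[effort]} tokens")
```

In the editor the choice is left to the developer rather than to a heuristic like this one; the point of the sketch is only that a higher setting buys more reasoning at a higher cost in latency and compute.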

Real-World Applications and Interface Refinement

The practical application of these features is already visible in front-end development, where the new dedicated image viewer for chat attachments allows for instant UI analysis. Developers can now drop a mockup into the chat, and the AI can compare the visual intent against the existing CSS directly in the workspace. This integration reduces the cognitive load of switching between design tools and the code itself, creating a more fluid creative process.

Refined “Light” and “Dark” themes, alongside improved browser tab management, complement these high-tech additions. These aesthetic updates are not just for show; they are designed to minimize distractions as the workspace becomes increasingly crowded with AI sidebars and chat windows. The result is a modern environment that manages to feel uncluttered despite the massive increase in underlying functionality.

Technical Hurdles and Regulatory Challenges

Despite the impressive feature set, the transition to a weekly release cycle introduces significant risks regarding software stability and user fatigue. Rapid updates can occasionally lead to regressions or “black box” logic errors where the AI’s autonomous decisions become difficult to audit. The industry is currently grappling with how to maintain oversight as subagents become more independent, creating a tension between the desire for total automation and the necessity of human accountability.

Additionally, the reliance on official APIs for session management highlights a broader industry shift toward more regulated data handling. While this improves security, it also creates a dependency on proprietary cloud services, which may be a hurdle for organizations with strict air-gapped requirements. Developers must remain vigilant, ensuring that the convenience of AI integration does not come at the cost of transparency or codebase security.

The Future of AI-Native Development Environments

The trajectory of this platform suggests a future where the editor is entirely context-aware, potentially predicting code changes before the developer even opens a file. We are likely to see even deeper bridges between local development environments and cloud-based reasoning engines, further blurring the line between the machine and the creator. This shift will eventually redefine the role of the software engineer, moving the profession toward high-level system architecture and agent management.

Comprehensive Assessment of Version 1.113

The release of version 1.113 solidifies the IDE’s position as the dominant force in the AI-assisted coding market. By embracing MCP and providing granular control over model reasoning, the update addresses the primary frustrations of professional developers who require more than a simple autocomplete tool. While the pace of change demands a higher level of adaptability from its users, the trade-off is a far more capable environment. The specialized customization editor and more robust session management provide a stable foundation for the next wave of autonomous engineering tools.