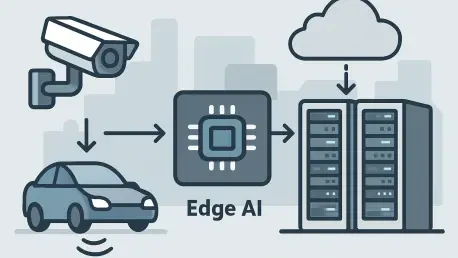

Imagine a world where a self-driving car detects and reacts to a pedestrian within milliseconds, or where a remote surgical robot performs life-saving procedures with no perceptible delay, both enabled by edge computing in AI infrastructure. By moving computation closer to data sources, edge computing is changing how AI delivers speed, resilience, and tailored experiences across industries. The shift matters because it meets modern demands for instantaneous decision-making and robust performance under ever-growing data loads.

This analysis dives into the ascent of edge computing as a cornerstone of AI infrastructure. It explores the trend’s momentum through market growth and real-world applications, incorporates expert insights on challenges and opportunities, and examines future implications for various sectors. Finally, actionable takeaways are provided for businesses aiming to harness this transformative technology.

The Rise of Edge Computing in AI Infrastructure

Growth and Adoption Trends

The adoption of edge computing for AI workloads is accelerating. Recent industry reports project significant growth in the edge AI market, with investment in distributed infrastructure expected to rise sharply between now and 2027. This surge reflects a broader shift from centralized, cloud-based AI processing to distributed models that place compute close to data sources, minimizing latency and improving performance.

Market studies underscore this momentum, highlighting how enterprises are increasingly allocating budgets toward edge solutions to support real-time AI inference. The transition is driven by the need for faster processing and the growing complexity of AI applications, which demand infrastructure capable of handling vast data streams at the point of origin. This trend is reshaping how businesses structure their digital ecosystems.

A key driver behind this growth is the recognition that edge computing enhances data privacy and reduces bandwidth costs compared to centralized systems. As more organizations embrace this approach, the landscape of AI infrastructure continues to evolve, setting new standards for efficiency and responsiveness across global markets.
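
To make the bandwidth argument concrete, here is a minimal Python sketch comparing two designs for a single video camera: shipping the full stream to the cloud versus running inference on an edge node and uploading only detection events. Every figure in it (an 8 Mbit/s stream, 2 KB events, 600 events per hour) is a hypothetical assumption chosen for illustration, not data from this article.

```python
# Back-of-the-envelope bandwidth comparison for one camera.
# All constants are hypothetical, illustrative assumptions.
SECONDS_PER_DAY = 24 * 60 * 60

RAW_STREAM_MBIT_S = 8.0    # assumed bitrate of one compressed HD video stream
EVENT_SIZE_BYTES = 2_000   # assumed size of one detection event (e.g. a small JSON record)
EVENTS_PER_HOUR = 600      # assumed number of detections forwarded upstream per hour


def raw_upload_gb_per_day(mbit_per_s: float) -> float:
    """GB/day if the whole stream is sent to the cloud (1 GB = 10**9 bytes)."""
    bytes_per_s = mbit_per_s * 1e6 / 8
    return bytes_per_s * SECONDS_PER_DAY / 1e9


def event_upload_gb_per_day(event_bytes: int, events_per_hour: int) -> float:
    """GB/day if inference runs at the edge and only events are uploaded."""
    return event_bytes * events_per_hour * 24 / 1e9


if __name__ == "__main__":
    raw = raw_upload_gb_per_day(RAW_STREAM_MBIT_S)
    events = event_upload_gb_per_day(EVENT_SIZE_BYTES, EVENTS_PER_HOUR)
    print(f"raw stream:  {raw:.1f} GB/day")
    print(f"events only: {events:.4f} GB/day")
    print(f"reduction:   ~{raw / events:,.0f}x")
```

Under these assumptions the raw stream needs roughly 86 GB per day against well under 1 GB for events only; actual savings depend entirely on the real bitrate and event rate.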

Real-World Applications and Use Cases

Edge computing is making tangible impacts across diverse industries, enabling AI to operate closer to where data is generated. In logistics, companies leverage edge AI for real-time delivery tracking and route optimization, ensuring streamlined warehouse operations and cargo security. This proximity reduces delays and enhances operational efficiency on a massive scale.

In smart cities, edge computing powers traffic management through real-time video analytics and sensor data processing. It also supports crime detection and emergency response by delivering immediate insights without round trips to distant cloud servers. Similarly, healthcare organizations use edge AI for continuous patient monitoring and remote robotic surgery, supporting rapid diagnostics and personalized treatment with minimal latency.

Notable examples include Nanyang Biologics, which uses distributed infrastructure for real-time collaboration in AI-driven drug discovery, achieving greater speed and accuracy at reduced costs. Additionally, Alembic, a marketing intelligence firm, optimizes customer insights by positioning AI infrastructure at the edge, enhancing performance through high-speed connectivity. These cases illustrate how edge computing is becoming integral to industry innovation.

Expert Perspectives on Edge AI Challenges and Opportunities

Industry leaders emphasize the transformative potential of edge computing in AI, particularly its ability to slash latency and bolster data privacy. By processing data closer to its source, businesses can deliver near-instantaneous results while minimizing the risks associated with transmitting sensitive information to centralized servers. This is seen as a critical advantage in sectors requiring strict confidentiality.
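
The latency advantage can be put in physical terms using the self-driving example from the introduction. The sketch below computes how far a vehicle travels while it waits for a decision; the 100 km/h speed and the 100 ms (remote cloud) versus 10 ms (edge) round-trip times are hypothetical values for illustration, not measurements.

```python
# Distance a vehicle covers while waiting for an AI decision.
# Speed and latency values below are hypothetical illustrations, not measurements.


def distance_during_latency_m(speed_kmh: float, latency_ms: float) -> float:
    """Metres travelled at `speed_kmh` during `latency_ms` of waiting."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s * latency_ms / 1000


if __name__ == "__main__":
    speed = 100.0  # km/h, assumed
    for label, latency in [("remote cloud (assumed)", 100.0), ("edge node (assumed)", 10.0)]:
        metres = distance_during_latency_m(speed, latency)
        print(f"{label:>24}: {latency:5.0f} ms -> {metres:.2f} m travelled")
```

At these assumed values the vehicle covers roughly 2.8 m versus 0.3 m before it can react, which illustrates why shaving network round trips matters for safety-critical workloads.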

However, adopting edge AI infrastructure brings challenges. Experts point to complex cost structures, in particular balancing operational expenditures (OPEX) against capital expenditures (CAPEX), as a significant hurdle. In addition, complying with data protection laws in more than 140 countries demands meticulous planning and localized infrastructure to meet varying regulatory requirements, which adds further complexity to deployment strategies.
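
One way to reason about the OPEX-versus-CAPEX trade-off is a simple break-even calculation: an edge deployment that requires upfront capital spending pays for itself once its lower monthly operating costs have offset that outlay. The Python sketch below assumes a flat monthly cost for both options and ignores financing, depreciation, and hardware refresh; the dollar figures in the usage example are hypothetical.

```python
import math
from typing import Optional


def breakeven_month(capex: float, edge_opex_monthly: float,
                    cloud_opex_monthly: float) -> Optional[int]:
    """First month in which cumulative edge cost (CAPEX + OPEX) is no higher
    than cumulative cloud-only OPEX. Returns None if edge never catches up.

    Simplifying assumptions: flat monthly costs, no financing or depreciation.
    """
    monthly_saving = cloud_opex_monthly - edge_opex_monthly
    if monthly_saving <= 0:
        return None  # edge OPEX is not lower, so the upfront CAPEX is never recovered
    return math.ceil(capex / monthly_saving)


if __name__ == "__main__":
    # Hypothetical figures for illustration only.
    print(breakeven_month(capex=120_000, edge_opex_monthly=4_000, cloud_opex_monthly=9_000))
```

With these hypothetical inputs the function returns 24, meaning the edge hardware pays back after two years; if edge operating costs are not lower than the cloud alternative, it returns None.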

Another concern is the need for scalable, interconnected systems to support edge AI workloads. Thought leaders stress that without robust infrastructure, businesses risk bottlenecks that could undermine performance gains. Overcoming these obstacles requires strategic investments in distributed networks and partnerships with providers capable of delivering seamless connectivity and compliance solutions.

Future Implications of Edge Computing in AI

Looking ahead, edge computing in AI is poised for remarkable evolution, particularly with the development of smaller, specialized models tailored for domain-specific applications. These compact models enable training directly at the edge, offering cost efficiency and superior performance for industries with unique data needs. This shift promises to refine how AI integrates into everyday operations.
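
A rough way to see why smaller, specialized models suit the edge is to estimate how much memory their weights alone occupy: parameter count times bytes per parameter. The sketch below uses the standard sizes of common numeric formats; the parameter counts are hypothetical examples, and the estimate deliberately ignores activations, caches, and, for training, gradients and optimizer state, which add substantially more.

```python
# Weights-only memory estimate: parameters x bytes per parameter.
# Ignores activations, caches, gradients and optimizer state (training needs far more).
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, dtype: str) -> float:
    """Approximate size of a model's weights in GB (1 GB = 10**9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


if __name__ == "__main__":
    # Hypothetical model sizes for illustration.
    for params, dtype in [(70e9, "fp16"), (7e9, "fp16"), (1e9, "int8"), (1e9, "int4")]:
        print(f"{params / 1e9:>4.0f}B params @ {dtype}: {weight_memory_gb(params, dtype):6.1f} GB")
```

Under these assumptions a 70-billion-parameter model in fp16 needs about 140 GB for its weights alone, while a 1-billion-parameter model quantized to int8 needs about 1 GB, a footprint within reach of far more modest edge hardware.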

Yet managing the sheer volume of data generated at the edge remains daunting. Smart factories, for instance, can produce up to 1 petabyte of data per day, while autonomous vehicles and airplanes generate terabytes. Handling such loads without sacrificing speed or incurring prohibitive costs requires new approaches to data storage and processing, along with careful navigation of complex regulatory landscapes.
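
The 1-petabyte-per-day figure is easier to appreciate as a sustained data rate. The sketch below converts a daily volume into average throughput, assuming a decimal petabyte (10^15 bytes) and a perfectly uniform rate across the day; real traffic is burstier, so peak requirements would be higher.

```python
# Convert a daily data volume into the average link throughput needed to move it.
# Assumes decimal (SI) units and a perfectly uniform rate over 24 hours.
SECONDS_PER_DAY = 24 * 60 * 60
PETABYTE = 10**15  # bytes


def sustained_rate(bytes_per_day: float) -> tuple[float, float]:
    """Return (gigabytes per second, gigabits per second)."""
    bytes_per_s = bytes_per_day / SECONDS_PER_DAY
    return bytes_per_s / 1e9, bytes_per_s * 8 / 1e9


if __name__ == "__main__":
    gb_s, gbit_s = sustained_rate(1 * PETABYTE)
    print(f"1 PB/day ~ {gb_s:.2f} GB/s ~ {gbit_s:.1f} Gbit/s, around the clock")
```

That works out to roughly 11.6 GB/s, or about 93 Gbit/s of continuous traffic for a single site, which helps explain why filtering and processing data locally is often preferable to shipping it all to a central cloud.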

The broader implications of edge AI span multiple sectors. In financial services, real-time fraud detection could become more precise; in media, content creation could achieve unprecedented personalization; and in autonomous vehicles, navigation could reach new levels of safety through instantaneous decision-making. As edge computing matures, its potential to redefine industry standards grows, paving the way for a more connected and responsive future.

Conclusion and Call to Action

Taken together, widespread adoption, impactful real-world applications, and the promise of future innovation make the strategic importance of edge computing in AI infrastructure clear. By prioritizing proximity and distributed processing, the technology helps meet modern performance demands, and its influence is already reshaping industries from logistics to healthcare, raising the bar for operational efficiency.

Businesses should take proactive steps, starting with an audit of their current AI setups to identify latency bottlenecks. Defining edge requirements and designing flexible multicloud strategies are critical for balancing workloads effectively. Leveraging global data center ecosystems and interconnected partner networks offers a way to future-proof AI strategies, providing resilience and scalability in an ever-evolving digital landscape.
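
As a starting point for the latency audit recommended above, the sketch below summarizes measured latencies per pipeline stage and flags stages whose 95th-percentile latency exceeds a budget. The stage names, sample values, and 50 ms budget are hypothetical; in practice the samples would come from your own tracing or monitoring data. Percentiles use the simple nearest-rank method.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of values <= it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


def find_bottlenecks(latencies_ms: dict[str, list[float]], budget_ms: float,
                     pct: float = 95) -> dict[str, float]:
    """Return {stage: percentile latency} for every stage that exceeds the budget."""
    report = {}
    for stage, samples in latencies_ms.items():
        p = percentile(samples, pct)
        if p > budget_ms:
            report[stage] = p
    return report


if __name__ == "__main__":
    # Hypothetical measurements (ms) for illustration only.
    measured = {
        "sensor_to_cloud_inference": [82, 95, 110, 121, 150],
        "on_device_preprocessing": [3, 4, 4, 5, 6],
        "edge_node_inference": [8, 9, 11, 12, 14],
    }
    print(find_bottlenecks(measured, budget_ms=50))
```

The nearest-rank method is a deliberate simplification; many monitoring tools interpolate between samples, so their reported percentiles may differ slightly.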