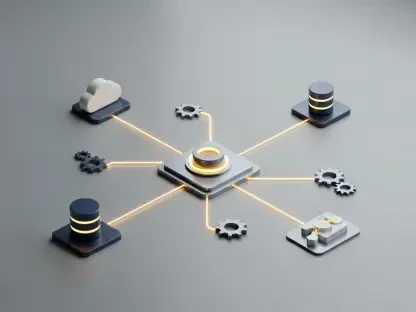

Modern enterprises frequently struggle with the logistics of migrating massive datasets into external processing environments just to use generative artificial intelligence models. Oracle has launched a suite of tools designed to embed agentic artificial intelligence directly into the database infrastructure. This strategic pivot addresses a persistent bottleneck in digital transformation: the friction between static data storage and dynamic algorithmic execution. By integrating AI capabilities within the existing data layer, organizations can build autonomous agents that interact with real-time information without the risks or costs associated with data movement. The move signals a transition away from monolithic AI projects toward nimble, task-oriented agents that manage specific business processes autonomously. The focus is no longer just on large language models but on how these models can be empowered to take action within a secure environment. This evolution is particularly important for industries where data latency and security are paramount, because it helps AI remain an asset rather than a liability.

Democratizing Advanced Artificial Intelligence: Integrated Systems

The cornerstone of this new ecosystem is the Autonomous AI Vector Database, which serves as a bridge for businesses of all sizes to access high-level analytics without requiring a massive capital outlay. By providing a free developer tier, Oracle has effectively lowered the financial barriers that previously sidelined smaller enterprises from participating in the AI revolution. This democratization is not merely about cost reduction; it is about providing the same sophisticated tools used by global corporations to agile, resource-constrained startups. These vector-powered applications allow for semantic search and complex pattern recognition that were once the exclusive domain of dedicated data science teams. Consequently, small business owners can now deploy intelligent recommendation engines or automated inventory management systems that adapt to market fluctuations in real time. This accessibility ensures that the technological gap between industry giants and emerging competitors continues to narrow, fostering a more competitive and innovative marketplace for digital services.
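To make the idea of vector-powered semantic search concrete, here is a minimal sketch in plain Python. It is not Oracle's API: the catalog, the three-dimensional "embeddings", and the function names are all hypothetical, and a real system would obtain its vectors from an embedding model and store them in the database. The sketch only shows the core mechanic, which is ranking items by how closely their vectors point in the same direction as the query vector.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction, so treat it as unrelated
    return dot / (norm_a * norm_b)

def semantic_search(query_vec, catalog, top_k=2):
    """Rank catalog items by similarity to the query vector, best first."""
    scored = [(cosine_similarity(query_vec, vec), name)
              for name, vec in catalog.items()]
    scored.sort(reverse=True)
    return [name for _, name in scored[:top_k]]

# Hypothetical toy embeddings; real ones come from an embedding model
# and typically have hundreds of dimensions.
CATALOG = {
    "trail running shoes": [0.9, 0.1, 0.0],
    "hiking boots": [0.8, 0.3, 0.1],
    "espresso machine": [0.0, 0.1, 0.9],
}

# A query vector that sits close to the footwear items.
results = semantic_search([1.0, 0.1, 0.0], CATALOG)
print(results)
```

A recommendation engine of the kind described above would follow the same pattern: embed what the customer is looking at, then return the nearest items.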

Complementing the database innovations is the Private Agent Factory, a no-code tool that lets business analysts design complex AI workflows. This initiative recognizes that the most valuable insights often reside with domain experts who lack specialized programming knowledge. By providing a visual interface for constructing AI agents, Oracle allows these experts to translate business logic directly into automated actions while maintaining strict data sovereignty. This shift toward self-service AI development reduces the reliance on overstretched IT departments and accelerates the deployment cycle for new digital initiatives. These agents can be configured to monitor supply chains, analyze customer sentiment, or optimize energy consumption within industrial facilities. Furthermore, because these workflows operate within private service containers, sensitive corporate data never leaves the controlled environment. This creates a secure sandbox for experimentation that does not expose intellectual property to the risks associated with public cloud processing.

Strengthening Operational Foundations: Unified Data Architectures

A critical component of these advancements is the Unified Memory Core, which addresses the inefficiency of the fragmented, multi-database architectures common in modern enterprise environments. In the past, businesses were forced to maintain separate systems for structured transactional data and unstructured vector data, creating significant operational overhead and increasing the likelihood of synchronization errors. Oracle’s approach consolidates these diverse data types into a single operational foundation, allowing AI agents to maintain context across an entire enterprise dataset. This architectural unity is essential for agentic AI, as it enables the system to pull historical sales figures and current market trends simultaneously to make informed decisions. By eliminating the need for complex data pipelines and extract-transform-load processes, the Unified Memory Core can reduce the total cost of ownership for AI initiatives. Organizations gain a more holistic view of their operations, which supports faster response times and predictive modeling that reflects the current state of the business.
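The benefit of keeping structured and vector data in one store can be sketched with Python's built-in `sqlite3` module standing in for the database. This is an illustration under stated assumptions, not Oracle's implementation: the `products` table, its columns, and the helper `best_sellers_like` are hypothetical, and the embeddings are toy values stored as JSON text. The point is that a single read applies a transactional filter (units sold) and a similarity ranking to the same rows, with no second system to keep in sync.

```python
import json
import math
import sqlite3

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms if norms else 0.0

# One in-memory table holds both the relational column (units_sold)
# and the vector column (embedding, serialized as JSON text).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE products (name TEXT, units_sold INTEGER, embedding TEXT)"
)
rows = [
    ("trail running shoes", 120, [0.9, 0.1, 0.0]),
    ("hiking boots", 15, [0.8, 0.3, 0.1]),
    ("espresso machine", 300, [0.0, 0.1, 0.9]),
]
conn.executemany(
    "INSERT INTO products VALUES (?, ?, ?)",
    [(name, units, json.dumps(vec)) for name, units, vec in rows],
)

def best_sellers_like(query_vec, min_units):
    """Filter on sales in SQL, then rank the survivors by vector similarity."""
    cursor = conn.execute(
        "SELECT name, units_sold, embedding FROM products WHERE units_sold >= ?",
        (min_units,),
    )
    scored = [
        (cosine(query_vec, json.loads(emb)), name, units)
        for name, units, emb in cursor
    ]
    scored.sort(reverse=True)
    return [(name, units) for _, name, units in scored]

print(best_sellers_like([1.0, 0.1, 0.0], min_units=100))
```

In a database with native vector support, the similarity ranking would run inside the query itself rather than in application code; the sketch does it in Python only because SQLite has no built-in vector type.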

While the promise of streamlined operations is compelling, the integration of AI into core databases raises significant questions regarding information security and the potential exposure of sensitive assets. Oracle has countered these concerns by embedding Deep Data Security protocols directly into the database layer, ensuring that every interaction between an AI agent and the data is governed by existing access controls. This granular level of security is designed to prevent the unintended disclosure of confidential information, even when agents are processing complex queries or generating summarized reports for external stakeholders. Industry experts have noted that without such a unified security foundation, businesses risk implementing fragmented AI solutions that could inadvertently leak data or violate privacy regulations. The use of private service containers further isolates the AI processing tasks, providing an additional layer of protection against external threats. By treating security as a foundational element rather than an afterthought, Oracle has created a framework in which private AI can be deployed while organizations keep control of their data.
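The principle that an agent's reads are governed by existing access controls can be illustrated with a short Python sketch. Everything here is hypothetical and greatly simplified: the roles, the column grants, the sample records, and the `agent_read` function are invented for illustration and do not describe how Deep Data Security is implemented. The sketch shows only the general pattern, in which the data layer checks the caller's grants before any row reaches the agent.

```python
# Hypothetical sample data; the addresses use the reserved .example domain.
RECORDS = [
    {"customer": "Acme", "region": "EU", "revenue": 1200,
     "contact_email": "ops@acme.example"},
    {"customer": "Globex", "region": "US", "revenue": 800,
     "contact_email": "it@globex.example"},
]

# Hypothetical grants: which columns each role may read.
COLUMN_GRANTS = {
    "analyst": {"customer", "region", "revenue"},
    "support": {"customer", "contact_email"},
}

def agent_read(role, columns):
    """Return the requested columns only if the role is granted all of them."""
    allowed = COLUMN_GRANTS.get(role, set())
    denied = [col for col in columns if col not in allowed]
    if denied:
        # Refuse the whole request rather than silently dropping columns,
        # so the agent cannot mistake a partial answer for a complete one.
        raise PermissionError(
            f"role {role!r} may not read: {', '.join(denied)}"
        )
    return [{col: row[col] for col in columns} for row in RECORDS]

# An agent acting for an analyst can summarize revenue...
print(agent_read("analyst", ["customer", "revenue"]))

# ...but the same agent cannot pull contact details.
try:
    agent_read("analyst", ["contact_email"])
except PermissionError as err:
    print(err)
```

Because the check lives next to the data rather than in each agent, a new agent inherits the same restrictions without any extra configuration.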

Strategic Pathways: Successful Enterprise AI Integration

Deploying these new tools requires a strategic shift in how organizations view their data infrastructure, moving away from passive storage toward active, agent-driven ecosystems. Leaders navigating this transition should map their internal processes to identify high-impact areas for automation before initiating full-scale deployment. No-code interfaces can ease the learning curve for existing staff, but ongoing training remains a priority so that the workforce stays adept at supervising autonomous systems. Decision-makers should also evaluate their current data silos and look to consolidate them into a unified memory architecture to maximize the efficiency of their agentic AI. This proactive stance allows businesses to enhance operational transparency while reducing the risks associated with data fragmentation. Ultimately, the move toward an integrated, secure AI environment suggests that the most effective way to adopt artificial intelligence is through a foundation that prioritizes data integrity.