The ability for a machine to decipher the nuance behind a human query was once a futuristic dream, yet today it is becoming a standard requirement for digital infrastructure. Instead of relying on rigid, word-for-word matches, modern systems are learning to interpret the spirit of a question. Traditional databases often fail when a user seeks “warm winter outerwear” but the record only contains “wool coats.” This gap between human thought and digital retrieval is closing as semantic search recognizes underlying context rather than just characters. Google Cloud’s integration of native vector support into Cloud SQL for MySQL marks a pivotal shift, allowing developers to build these intelligent experiences without leaving their familiar database environment.

The End of the Keyword Era: Search That Understands Intent

Data querying is moving away from rigid keyword matching toward systems that understand human context. A standard database query returns nothing relevant when the exact phrase isn’t present, whereas semantic search recognizes the intent and returns items that share conceptual meaning. This allows for a more natural interaction with software, where the burden of precision shifts from the user to the underlying technology.

By integrating these capabilities directly into the database, organizations can provide a more intuitive user experience. Developers no longer need to map every possible synonym or variation of a search term manually. Instead, the system learns the relationships between words and concepts, ensuring that the most relevant information surfaces regardless of the specific terminology used by the searcher.

Bridging the Gap Between Relational Databases and Generative AI

For years, the rise of Generative AI forced a difficult architectural choice: maintain a reliable relational database or adopt a specialized vector database to handle complex AI workflows. This fragmentation often led to data silos, increased operational costs, and synchronization headaches between different storage layers. Managing two separate systems meant that updates to primary data were not immediately reflected in the search index, creating a lag that compromised the accuracy of AI-driven applications.

By bringing vector capabilities directly into Cloud SQL, Google Cloud addresses the core concern of modern data architects. They can now leverage n-dimensional numerical representations of data while maintaining the transactional integrity and crash safety that MySQL is known for. This unified approach simplifies the development lifecycle and ensures that the data used for semantic search is always as current as the operational database itself.

Technical Architecture of Native Vector Support in Cloud SQL

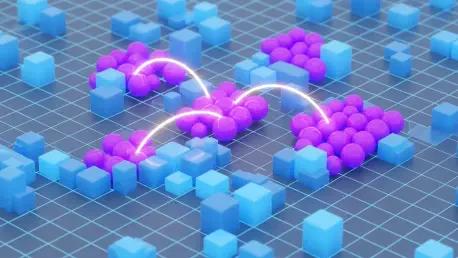

At the heart of this update is the treatment of vector embeddings as first-class database objects. These numerical representations are generated via Vertex AI, which transforms complex text or images into coordinates within a mathematical space. Because these vectors are stored as standard objects, they can be indexed and queried alongside traditional data types. This allows for a seamless blend of structured and unstructured information within a single query.
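To make the idea of blending structured and unstructured data concrete, here is a minimal in-memory sketch in Python. It is not Cloud SQL syntax: the `Product` fields, the `search` helper, and the two-dimensional toy embeddings are all hypothetical, chosen only to show an ordinary column filter combined with a vector ordering in one lookup.

```python
from dataclasses import dataclass


@dataclass
class Product:
    sku: str
    price: float
    in_stock: bool
    embedding: list[float]  # hand-made toy values; a real system would get these from an embedding model


def squared_l2(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def search(products, query_embedding, max_price, limit):
    """Filter on ordinary columns, then order the survivors by vector distance."""
    candidates = [p for p in products if p.in_stock and p.price <= max_price]
    candidates.sort(key=lambda p: squared_l2(p.embedding, query_embedding))
    return [p.sku for p in candidates[:limit]]


PRODUCTS = [
    Product("COAT-1", 120.0, True, [0.9, 0.1]),
    Product("COAT-2", 480.0, True, [0.95, 0.05]),
    Product("SHIRT-1", 40.0, True, [0.1, 0.9]),
    Product("COAT-3", 150.0, False, [0.92, 0.08]),
]
```

Calling `search(PRODUCTS, [1.0, 0.0], max_price=200.0, limit=2)` drops the out-of-stock and over-budget rows first and only then ranks what remains by distance, which is the same shape a combined relational and vector query would take.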

Developers can now use native SQL syntax to perform similarity searches, so they no longer have to learn a separate query language to find data points that lie close together. Using a distance metric such as cosine or Euclidean distance, the database scores how close each stored vector is to the embedding of a user’s natural language input. The smaller the distance, the more relevant the row, and this mathematical “closeness” is what lets the database deliver highly relevant results in milliseconds.
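The notion of vector “closeness” can be sketched in a few lines of Python. This is an illustration of the underlying arithmetic, not the database's own implementation: the function names, the `CATALOG` rows, and the three-dimensional toy vectors are assumptions made for the example, and real embeddings have far more dimensions.

```python
import math


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def l2_distance(a, b):
    """Euclidean (straight-line) distance."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def cosine_distance(a, b):
    """1 minus cosine similarity: 0 means same direction, larger means less alike."""
    return 1.0 - dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))


def nearest(query, rows, k, distance=cosine_distance):
    """Exact nearest-neighbor search: score every row, keep the k smallest distances."""
    scored = sorted(rows, key=lambda row: distance(query, row[1]))
    return [name for name, _ in scored[:k]]


# Hand-made toy vectors; the values are illustrative, not model output.
CATALOG = [
    ("wool coat", [0.9, 0.8, 0.1]),
    ("down parka", [0.8, 0.9, 0.2]),
    ("linen shirt", [0.1, 0.2, 0.9]),
]
QUERY = [0.85, 0.85, 0.15]  # stands in for the embedding of "warm winter outerwear"
```

With these toy values, `nearest(QUERY, CATALOG, k=2)` returns the two outerwear items and leaves out the linen shirt, even though the query shares no keywords with either product name.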

Practical Industry Applications for Semantic Search

Industry impact is already visible in retail, where shoppers discover products using natural descriptions rather than technical SKU numbers. This shift enhances product discovery by allowing users to describe what they need in plain language, which the system then matches to the best available options. In financial services, this technology sharpens fraud detection by spotting subtle patterns and anomalies that resemble known criminal behavior across millions of historical transactions.

Healthcare providers are also adopting these tools to match patient symptom profiles against vast medical databases to identify potential illnesses. This assists in providing faster, more accurate diagnostics based on historical cases that share similar characteristics. Furthermore, large organizations use semantic search to streamline internal knowledge management, searching for themes and concepts across massive document repositories rather than hunting for exact text strings.

Strategic Implementation and Design Considerations

Implementing these systems requires a careful transition from exact K-Nearest Neighbor (KNN) search, which compares the query against every row, to Approximate Nearest Neighbor (ANN) search, which scans only part of the data to keep latency low as datasets grow. A vector index can be created only once the table holds at least 1,000 rows, so early data collection is a vital part of the planning process. Performance tuning then becomes a balance of speed and accuracy, with the index configuration adjusted to meet specific application requirements.
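The trade-off between exact and approximate search can be illustrated with a toy partitioned index in Python. This is a simplified sketch of the general inverted-file idea, not a description of how Cloud SQL builds its index: `PartitionedIndex`, `num_partitions`, and `partitions_to_probe` are hypothetical names, and the centroids are simply sampled rows rather than trained. The only figure taken from the article is the 1,000-row minimum.

```python
import random

MIN_ROWS_FOR_INDEX = 1000  # threshold stated in the article


def squared_l2(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def exact_knn(query, vectors, k):
    """Exact KNN: compare the query against every stored vector."""
    return sorted(range(len(vectors)), key=lambda i: squared_l2(query, vectors[i]))[:k]


class PartitionedIndex:
    """Toy ANN index: each row is bucketed under its closest centroid."""

    def __init__(self, vectors, num_partitions, seed=0):
        if len(vectors) < MIN_ROWS_FOR_INDEX:
            raise ValueError("need at least 1,000 rows to build the index")
        self.vectors = vectors
        # Assumption: centroids are sampled rows; a real index would train them.
        self.centroids = random.Random(seed).sample(vectors, num_partitions)
        self.partitions = [[] for _ in range(num_partitions)]
        for i, v in enumerate(vectors):
            self.partitions[self._closest_centroids(v, 1)[0]].append(i)

    def _closest_centroids(self, v, n):
        order = sorted(range(len(self.centroids)),
                       key=lambda c: squared_l2(v, self.centroids[c]))
        return order[:n]

    def search(self, query, k, partitions_to_probe):
        """Scan only the partitions closest to the query, then rank those rows."""
        candidates = []
        for c in self._closest_centroids(query, partitions_to_probe):
            candidates.extend(self.partitions[c])
        candidates.sort(key=lambda i: squared_l2(query, self.vectors[i]))
        return candidates[:k]
```

Probing a single partition touches only a fraction of the rows and may miss a true neighbor that sits just across a partition boundary. Probing every partition reproduces the exact result at full cost. Tuning sits between those two extremes.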

Architects must also select their embedding models with care, because embeddings produced by different models are not compatible with one another. If a developer switches to a newer model, the embeddings for all existing data have to be recalculated to keep comparisons consistent. As data volumes expand, dynamic index partitioning remains essential for maintaining efficiency. Looking ahead, automated scaling and model monitoring are likely to define the next generation of intelligent, database-driven applications.
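One way to make a model switch manageable is to record which model produced each embedding, so stale rows can be found and recomputed. The Python sketch below assumes that bookkeeping approach; the `Row` fields, `reembed_stale_rows`, and `fake_embed_v2` are hypothetical, and the fake embedder is a stand-in for a real embedding call rather than an actual model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Row:
    text: str
    embedding: Optional[list[float]] = None
    model_version: Optional[str] = None  # which model produced `embedding`


def reembed_stale_rows(rows, embed, current_version):
    """Recompute embeddings for rows not produced by current_version.

    Returns the number of rows that were updated.
    """
    updated = 0
    for row in rows:
        if row.model_version != current_version:
            row.embedding = embed(row.text)
            row.model_version = current_version
            updated += 1
    return updated


def fake_embed_v2(text):
    # Placeholder only: derives two numbers from the text so the sketch runs offline.
    return [float(len(text)), float(text.count(" "))]
```

Running `reembed_stale_rows(rows, fake_embed_v2, "v2")` updates only rows tagged with an older version or with no version at all, so a second run over the same rows does no further work.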