The sheer magnitude of information generated every second across the globe has transformed the foundational architecture of corporate decision-making into a high-stakes arena where only the most adept data architects can survive the scrutiny of an interview. In the current landscape of 2026, the primary challenge for enterprises is no longer the mere acquisition of data but the sophisticated synthesis of that data into predictive intelligence. Modern corporations have moved beyond the basic storage paradigms of previous years, seeking instead to build ecosystems where artificial intelligence and machine learning models are fed by high-fidelity, real-time data streams. This shift requires a deep understanding of how raw information is refined through complex pipelines into strategic assets.

When candidates enter the interview room today, they are expected to define big data not just as “large data sets” but as an intricate tapestry of structured, semi-structured, and unstructured information. A professional response should highlight that big data includes everything from traditional database entries to server logs, social media interactions, and telemetry from billions of IoT devices. The benefit to a business is multifaceted; it involves personalizing customer experiences, optimizing supply chains, and identifying untapped market segments through predictive modeling. Interviewers look for individuals who can explain that the ultimate goal of big data analytics is to provide a competitive advantage by converting massive, heterogeneous datasets into actionable insights that drive revenue and operational efficiency.

The Shift Toward Intelligent Insights: Understanding the Modern Enterprise

The transition from simple data storage to complex AI-driven synthesis has fundamentally redefined what it means to be a modern enterprise. In 2026, organizations are focused on “intelligent insights,” which refers to the ability of a system to not only report what happened but to predict what will happen and prescribe the best course of action. This evolution has placed a significant burden on the underlying data frameworks. A common interview question involves discussing the specific challenges of a big data project, such as the difficulty of ensuring data quality or the immense cost of scaling infrastructure. Candidates must be prepared to discuss how managing these environments requires balancing the needs for security, high-speed processing, and cost-effective commodity hardware.

Furthermore, the rise of generative AI has made the veracity of data more important than ever. If the input data is flawed, the resulting AI outputs will be untrustworthy, leading to poor strategic decisions. Professionals in the field are now expected to be experts in data cleansing and feature selection, ensuring that only the most relevant variables are used in modeling. This prevents “noise” from distorting the results and reduces the computational overhead required to process the data. A sophisticated answer in a 2026 interview would connect the technical mechanics of data ingestion to the final business outcome, showing a holistic understanding of how intelligence is manufactured within the firm.

Why This Expertise Matters: Bridging the Gap Between Raw Data and Strategy

Demand is surging for specialists who can bridge the gap between massive raw datasets and corporate strategy. Organizations are no longer satisfied with engineers who only understand the “how” of technology; they want leaders who understand the “why.” When an interviewer asks about a candidate’s specific experiences in big data, they are looking for a narrative of problem-solving. It is essential to describe past roles by highlighting how technical decisions (like choosing between different Hadoop distributions or optimizing a MapReduce job) directly resulted in a measurable business improvement, such as reduced latency or increased accuracy in customer churn predictions.

This expertise is critical because the complexity of the data ecosystem has outpaced the available talent pool. Many organizations struggle with a lack of in-house skills, making the few professionals who can navigate these systems highly valuable. A candidate should be able to articulate the nuances of the “Five Vs” of big data: Volume, Velocity, Variety, Veracity, and Value. While volume and velocity were the primary concerns in the past, the focus has shifted toward veracity and value. Interviewers often probe this by asking how one ensures that the data being collected is actually useful and accurate, rather than just taking up space in a data lake.

What This Guide Covers: Technical, Architectural, and Practical Knowledge

This guide provides a high-level overview of the technical, architectural, and practical knowledge required to excel in high-stakes big data interviews by examining the most pressing questions and technical scenarios. It covers the essential mechanics of the Hadoop ecosystem, the intricacies of real-time versus batch processing, and the operational commands necessary for maintaining cluster health. For instance, knowing the difference between the Standalone, Pseudo-distributed, and Fully distributed modes of Hadoop is a baseline requirement. Candidates should understand that while Standalone mode, which runs everything in a single JVM against the local file system, is for debugging, Fully distributed mode is the standard for production, where daemons run on separate machines in a cluster that can scale to thousands of nodes to ensure fault tolerance and high availability.

Beyond theoretical definitions, this guide addresses practical troubleshooting and system optimization. Questions about the jps command, a JDK utility that lists running Java processes and is used to check that Hadoop daemons like NameNode and DataNode are up, or the fsck command (run as hdfs fsck) for file system consistency checks, are common ways to test hands-on proficiency. The guide also delves into security protocols like Kerberos, which is the standard for protecting data from unauthorized access through secret-key cryptography. By mastering these diverse topics, a professional demonstrates that they are not just a theoretician but a practitioner capable of maintaining the integrity and performance of a modern data platform.

Master the Core Principles and Frameworks

Breaking Down the Architecture: From Ingestion to Actionable Intelligence

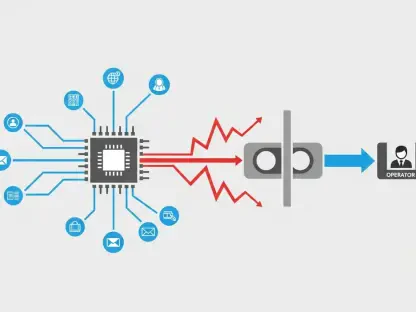

The modern data pipeline has evolved into a refined three-step lifecycle consisting of collection, distributed storage, and multi-framework processing. Data ingestion serves as the starting point, where information is gathered from various sources like server logs or IoT sensors. In 2026, the focus has shifted heavily toward real-time streaming to support instantaneous decision-making, moving away from the slower, traditional batch processing methods. An interview question might ask for the key steps in deploying a big data platform, and the response must emphasize the importance of a seamless flow from ingestion tools like Kafka or Flume into a robust storage layer like HDFS or a NoSQL database.

Once data is stored, it must be prepared for analysis through frameworks like Apache Spark or MapReduce. The friction between legacy systems and modern cloud-native big data platforms is a common point of discussion in professional circles. While legacy systems were often siloed and difficult to scale, modern platforms are designed for horizontal scalability. This means that as the volume of data grows, an organization can simply add more commodity hardware to the cluster. Candidates should be able to explain how this architectural shift allows for more flexible data processing and how it supports the integration of diverse data types without the need for rigid, predefined schemas.

The final stage of the architecture is the transformation of processed data into actionable intelligence. This involves using machine learning and predictive analytics to find patterns that would be invisible to the human eye. In an interview, it is helpful to discuss how the architecture must be designed with “data locality” in mind—moving the computation to the data rather than moving the data to the computation. This reduces network congestion and significantly speeds up the processing time. By explaining the architecture as a cohesive system designed for speed and reliability, a candidate proves they can design solutions that meet the high-performance demands of today’s business environment.
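The data locality idea can be illustrated with a small scheduling sketch. This is not Hadoop's scheduler code; it is a simplified stand-in, and the function name `pick_node` and its parameters are hypothetical. It assumes the scheduler knows which nodes hold a replica of the block and which rack each node sits on, and it prefers a node-local slot, then a rack-local slot, then any free node.

```python
from typing import Dict, List, Set


def pick_node(block_holders: Set[str], free_nodes: List[str], rack_of: Dict[str, str]) -> str:
    """Choose where to run a task: node-local first, then rack-local, then anywhere.

    All names here are illustrative assumptions, not a real Hadoop API.
    """
    if not free_nodes:
        raise ValueError("no free nodes available")
    # 1. Node-local: a free node already stores a replica of the block.
    for node in free_nodes:
        if node in block_holders:
            return node
    # 2. Rack-local: a free node shares a rack with some replica holder.
    holder_racks = {rack_of[holder] for holder in block_holders}
    for node in free_nodes:
        if rack_of[node] in holder_racks:
            return node
    # 3. Off-rack: the block must cross racks, the most expensive option.
    return free_nodes[0]
```

In an interview, walking through the three tiers in this order is a compact way to show why moving computation to the data reduces network traffic.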

The Hadoop Ecosystem: Why Distributed Computing Still Rules the Enterprise

Hadoop remains a cornerstone of the enterprise because of its unparalleled ability to handle massive, diverse datasets using distributed computing. The ecosystem is built on the “Big Four” modules: Hadoop Common, HDFS, YARN, and MapReduce. Hadoop Common provides the necessary utilities, while HDFS acts as the primary storage system. YARN serves as the resource management layer, scheduling jobs and allocating resources across the cluster. MapReduce is the framework used for parallel processing. Even in a hybrid-cloud world, these modules provide a level of stability and scalability that newer technologies often struggle to match, especially when dealing with petabytes of legacy data.

Real-world applications of this ecosystem are everywhere, such as in global logistics firms that utilize HDFS to gain high-throughput access to telemetry data from millions of delivery vehicles. This allows them to optimize routes in real-time and predict maintenance needs before a breakdown occurs. However, the complexity of managing a Hadoop cluster is a significant risk. Vendors like Cloudera or AWS EMR have stepped in to mitigate this overhead by offering managed services that simplify deployment and scaling. In an interview, discussing the pros and cons of these different distributions shows that a candidate is aware of the operational realities of maintaining such a complex environment.

The continued relevance of MapReduce, despite the rise of Spark, is also a frequent topic. MapReduce is often preferred for its robustness in handling extremely large batch jobs where processing time is less critical than the absolute reliability of the result. It breaks tasks into two main phases: the Map phase, which turns input records into intermediate key-value pairs (filtering as needed), and the Reduce phase, which aggregates the values for each key after the framework has shuffled and sorted them. A candidate should be able to explain how MapReduce provides its own level of fault tolerance by re-executing failed tasks, making it an essential tool in any big data engineer’s arsenal. Understanding how these components interact within the YARN framework is key to demonstrating mastery over the distributed computing paradigm.
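The two phases are easiest to explain with the classic word-count example. The sketch below imitates the model in plain Python on a single machine; it is not the Hadoop API, and the function names are chosen only for illustration. The `shuffle` function stands in for the grouping and sorting that the framework performs between the two phases.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    """Emit a (word, 1) pair for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Group values by key and sort the keys, as the framework does between phases."""
    grouped: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return dict(sorted(grouped.items()))


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    """Aggregate all values seen for one key."""
    return key, sum(values)


def word_count(lines: Iterable[str]) -> Dict[str, int]:
    """Run map, shuffle, and reduce in sequence over the input lines."""
    pairs = (pair for line in lines for pair in map_phase(line))
    return dict(reduce_phase(key, values) for key, values in shuffle(pairs).items())
```

On a real cluster each map and reduce call would run as a separate task on a different node, which is what lets a failed task be re-executed without restarting the whole job.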

Beyond the Five Vs: New Dimensions of Data Quality and Variability

The conversation around big data has moved past the traditional volume and velocity to explore the critical importance of veracity and value. Veracity refers to the truthfulness and accuracy of the data, which is paramount when building high-precision machine learning models. If a model is trained on biased or inaccurate data, the resulting predictions will be fundamentally flawed. Value, on the other hand, asks whether the insights gathered are worth the cost of the storage and processing. In 2026, professionals are increasingly challenging the assumption that “more data is better,” focusing instead on the actual utility of the information to avoid the “data swamp” phenomenon.

Furthermore, new dimensions like “variability” and “volatility” have emerged as key factors in managing complex datasets. Variability refers to the changing nature of data formats and meanings over time, such as how sensor data might fluctuate based on seasonal patterns or environmental conditions. Volatility refers to how long data remains relevant and needs to be stored. Managing these factors requires sophisticated data lifecycle management strategies. A candidate might be asked how they handle outliers—data points that are abnormally distant from the rest. The answer should involve using techniques like extreme value analysis or probabilistic models to determine if an outlier is a significant signal or just noise.
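As one concrete way to flag extreme values, the sketch below uses Tukey's interquartile-range fences. This is a simple statistical rule chosen for illustration, not the only form of extreme value analysis, and the multiplier of 1.5 is a conventional default rather than a requirement. It assumes Python 3.8 or later for `statistics.quantiles`.

```python
from statistics import quantiles
from typing import List, Sequence


def find_outliers(values: Sequence[float], k: float = 1.5) -> List[float]:
    """Return values lying more than k * IQR outside the first or third quartile."""
    q1, _, q3 = quantiles(values, n=4)  # three cut points: Q1, median, Q3
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < lower or v > upper]
```

A flagged point still needs a human or model-based judgment: a sensor reading far outside the fences may be a fault, or it may be exactly the signal the business cares about.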

Feature selection also plays a vital role in ensuring data quality. By using filter, wrapper, or embedded methods, data scientists can extract the most relevant information from a dataset, reducing the computational load and improving model accuracy. In an interview, explaining the trade-offs between these methods—such as the high computational cost of wrapper methods versus the speed of filter methods—demonstrates a deep understanding of the data preparation process. Ultimately, the goal is to create a high-fidelity dataset that provides clear, actionable insights without wasting valuable corporate resources on irrelevant information.
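A filter method can be sketched in a few lines: score each feature independently of any model, then keep the best ones. The example below ranks features by the absolute Pearson correlation with the target. The scoring choice and the helper names are assumptions made for illustration; other filter scores such as mutual information or chi-squared follow the same pattern.

```python
from math import sqrt
from typing import Dict, List, Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient; returns 0.0 if either input is constant."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / sqrt(var_x * var_y)


def select_top_k(features: Dict[str, Sequence[float]],
                 target: Sequence[float], k: int) -> List[str]:
    """Filter method: keep the k features most correlated with the target."""
    scores = {name: abs(pearson(column, target)) for name, column in features.items()}
    return sorted(scores, key=scores.get, reverse=True)[:k]
```

Because each feature is scored once and no model is trained, this is cheap, which is the trade-off against wrapper methods that retrain a model for every candidate subset.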

Operational Excellence: Performance Tuning and Cluster Resilience

Achieving operational excellence in a big data environment requires a deep understanding of performance tuning and cluster resilience. This starts with choosing the right operational mode for the task at hand. While Standalone and Pseudo-distributed modes are excellent for initial development and debugging, a Fully distributed mode is necessary for production to ensure that data is replicated across multiple nodes. This replication is what makes HDFS fault-tolerant; if one DataNode fails, the NameNode can direct the application to a replicated copy on another node, preventing data loss and ensuring continuous operation.

Rack awareness is another critical defensive strategy against hardware degradation and network congestion. By distributing block replicas across different physical racks, the NameNode ensures that even if a whole rack fails due to a power outage or switch failure, the data remains accessible. This strategy also optimizes performance by preferring to read data from a node on the same rack, reducing cross-rack network traffic. In an interview, explaining how rack awareness contributes to both reliability and speed shows a candidate’s ability to think about the physical infrastructure supporting the logical data structures.
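The placement idea can be sketched as follows. With a replication factor of three, the default HDFS policy puts the first replica on the writer's node, the second on a node in a different rack, and the third on another node in that same remote rack. The code below is a deterministic simplification for discussion: the real NameNode picks among eligible nodes at random and weighs load and free space, and the `place_replicas` function and its topology format are hypothetical.

```python
from typing import Dict, List


def place_replicas(topology: Dict[str, List[str]], writer_node: str) -> List[str]:
    """Pick three replica locations spanning exactly two racks.

    topology maps a rack name to the nodes on that rack (illustrative format).
    """
    rack_of = {node: rack for rack, nodes in topology.items() for node in nodes}
    local_rack = rack_of[writer_node]
    for rack, nodes in topology.items():
        # The second and third replicas share one remote rack but sit on different nodes.
        if rack != local_rack and len(nodes) >= 2:
            return [writer_node, nodes[0], nodes[1]]
    raise ValueError("need a second rack with at least two nodes")
```

Two racks are enough to survive the loss of either one, while keeping two replicas on the same rack limits the cross-rack traffic generated by each write.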

Speculative execution is a further optimization technique that helps maintain cluster performance. If Hadoop detects that a particular task is running much slower than others—perhaps due to a failing hard drive or a slow network link—it will launch a duplicate “speculative” task on another node. Whichever task finishes first is kept, and the other is killed. This prevents a single “straggler” node from delaying an entire job. Experts in 2026 are also looking toward the future of “self-healing” clusters that can automate the identification and resolution of such issues using diagnostic tools and AI-driven monitoring. Demonstrating knowledge of these proactive strategies is a hallmark of a senior-level candidate.
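The straggler logic can be approximated with a very small heuristic. Hadoop's actual speculation decision is more involved than this, so treat the sketch as an explanation aid only: the threshold value, the progress-based comparison, and both function names are assumptions.

```python
from statistics import mean
from typing import Dict, List


def find_stragglers(progress: Dict[str, float], slowdown_threshold: float = 0.5) -> List[str]:
    """Return task IDs whose progress is below slowdown_threshold times the average.

    progress maps a task ID to a completion fraction between 0.0 and 1.0.
    """
    average = mean(progress.values())
    return sorted(task for task, done in progress.items()
                  if done < slowdown_threshold * average)


def first_finisher(original_finish: float, speculative_finish: float) -> str:
    """Whichever attempt finishes first is kept; the other attempt is killed."""
    return "original" if original_finish <= speculative_finish else "speculative"
```

The cost of this safety net is duplicated work, which is why speculation is often tuned or disabled on clusters that are already short on capacity.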

Strategies for Technical Success and Career Growth

The Modern Professional’s Toolkit: Impactful Takeaways

The modern professional’s toolkit must extend beyond basic coding to include a deep mastery of security, resource management, and troubleshooting. Mastering the Kerberos security protocol is non-negotiable in an era where data breaches can cost millions. Candidates should be able to explain the three-step process that Kerberos uses: authentication, in which the client obtains a ticket-granting ticket (TGT) from the authentication server; authorization, in which the client presents the TGT to the ticket-granting server to obtain a service ticket; and the service request, in which the client presents that service ticket to the target service. Together these steps ensure that only verified users can access sensitive datasets. Additionally, understanding the nuances of feature selection and data normalization is essential for anyone working with modern AI models, as these steps directly impact the efficiency and accuracy of the resulting algorithms.

Another impactful takeaway for any candidate is the importance of understanding the hardware-software interface. This includes knowing the benefits of commodity hardware—its low cost and ease of replacement—and how HDFS is designed specifically to run on such systems. A professional should be comfortable with the command line, using tools like the JPS command to verify that all necessary daemons, such as the ResourceManager and NodeManager, are functioning correctly. By combining these technical skills with a broad understanding of the business goals, a specialist becomes an indispensable asset to their organization.

Finally, the toolkit should include a strong grasp of the various configuration files used in the Hadoop ecosystem, such as yarn-site.xml and hdfs-site.xml. Knowing how to tune these files to optimize memory allocation or block sizes can make the difference between a sluggish cluster and a high-performance engine. In an interview, being able to pinpoint which file to edit for a specific performance issue demonstrates a level of hands-on experience that theoretical knowledge alone cannot provide. These practical insights are what separate the top-tier candidates from those who have only studied the concepts in a classroom.
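To make the file-to-setting mapping concrete, the sketch below generates the property layout that Hadoop site files use. The property names (`dfs.replication`, `dfs.blocksize`, `yarn.nodemanager.resource.memory-mb`) are real Hadoop settings, but the values are placeholders for illustration and not tuning recommendations, and the helper `build_site_xml` is hypothetical.

```python
import xml.etree.ElementTree as ET
from typing import Dict


def build_site_xml(properties: Dict[str, str]) -> str:
    """Render name/value pairs in the <configuration><property> layout of Hadoop site files."""
    root = ET.Element("configuration")
    for name, value in properties.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = value
    return ET.tostring(root, encoding="unicode")


# Storage settings belong in hdfs-site.xml (values are illustrative only).
HDFS_SITE = build_site_xml({
    "dfs.replication": "3",          # default replica count for new files
    "dfs.blocksize": "268435456",    # 256 MiB expressed in bytes
})

# Resource settings belong in yarn-site.xml (value is illustrative only).
YARN_SITE = build_site_xml({
    "yarn.nodemanager.resource.memory-mb": "65536",  # memory a NodeManager may hand out
})
```

A useful interview shorthand follows from this split: block size and replication questions point to hdfs-site.xml, while container memory questions point to yarn-site.xml.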

Best Practices: Navigating AI-Filtered Recruitment and Demonstrating Intuition

In 2026, the recruitment process itself is often governed by AI, making it essential to tailor resumes with the specific keywords and technical accomplishments that these algorithms look for. However, once a candidate reaches the human interview stage, the focus shifts toward demonstrating “data intuition.” This is the ability to look at a complex problem and instinctively know which frameworks or techniques will be most effective. It involves moving past textbook answers to provide nuanced perspectives on real-world challenges, such as how to handle data drift in machine learning models or how to manage the “volatility” of streaming data.

Actionable advice for 2026 includes focusing on soft skills like communication and problem-solving alongside technical proficiency. A candidate must be able to translate “data-speak” into business value, explaining to non-technical stakeholders how a change in the data pipeline will improve the company’s bottom line. During an interview, this can be demonstrated by asking thoughtful questions about the company’s data culture or its long-term strategy for AI integration. Showing that you are a “whole-brain” thinker who can bridge the gap between the server room and the boardroom is a massive competitive advantage.

Furthermore, maintaining a commitment to continuous learning is a best practice that cannot be ignored. The field is moving so fast that certifications and skills from a few years ago may already be outdated. Mentioning current studies in emerging areas like “green data” or ethical AI handling can signal to an interviewer that you are a forward-thinking professional who is prepared for the challenges of tomorrow. This proactive approach to career growth shows a level of passion and dedication that is highly attractive to employers looking for long-term leaders in their data departments.

Practical Application: Demonstrating Troubleshooting Proficiency During the Interview

Troubleshooting proficiency is often tested in interviews through scenario-based questions that require a candidate to diagnose a problem in real-time. For example, an interviewer might ask what to do if a Hadoop job is stuck in a “pending” state. A knowledgeable response would involve checking the YARN ResourceManager to see if there are sufficient resources available and then using the JPS command to ensure all necessary daemons are running. This demonstrates that the candidate has a logical, step-by-step approach to problem-solving and is familiar with the actual tools used in the field.
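The daemon check in that answer can be automated with a few lines. The sketch below parses text in the default `jps` layout, one process per line as a process ID followed by a class name, and reports which expected daemons are absent. The helper names are hypothetical, and any sample text passed in would be hand-written for illustration rather than captured from a real cluster.

```python
from typing import Iterable, Set


def running_daemons(jps_output: str) -> Set[str]:
    """Extract process names from jps-style lines of the form '<pid> <name>'."""
    names = set()
    for line in jps_output.splitlines():
        parts = line.split(maxsplit=1)
        if len(parts) == 2:  # skip blank lines and entries without a name
            names.add(parts[1].strip())
    return names


def missing_daemons(jps_output: str, expected: Iterable[str]) -> Set[str]:
    """Return the expected daemons that do not appear in the jps output."""
    return set(expected) - running_daemons(jps_output)
```

If the result contains NodeManager, for example, the pending job has a likely explanation: the ResourceManager has no worker capacity to allocate containers on that host.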

Another common practical scenario involves dealing with a NameNode failure. A candidate should be able to explain that the Secondary NameNode is a checkpointing helper rather than a hot standby: it periodically merges the edit log into the fsimage so that a restarted NameNode can recover faster. Automatic failover instead requires a High Availability (HA) setup, in which a standby NameNode stays in sync with the active one and can take over quickly. Discussing the importance of the edit log (the transaction log) and fsimage files in this process shows a deep understanding of HDFS internals. This level of detail is what interviewers are looking for when they want to verify that a person has actual production experience rather than just a surface-level understanding of the technology.

Being able to navigate configuration files is another way to show practical skill. If an interviewer asks how to change the replication factor for a specific file, a candidate should know that this is done from the command line with hdfs dfs -setrep, whereas the dfs.replication property in hdfs-site.xml sets the default for newly written files. Similarly, knowing how to use the fsck command (hdfs fsck) to find corrupt blocks and understand the status of the file system is a vital skill for any cluster administrator. By grounding theoretical answers in these practical applications, a candidate provides tangible proof of their expertise and their ability to handle the daily operational challenges of a big data environment.
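The two commands can be written down as argument lists, which is also how a monitoring script would typically pass them to a subprocess. The sketch only builds the commands and does not run them. The helper names and any example path are hypothetical; the flags shown (`-setrep`, `-w`, `-files`, `-blocks`, `-locations`) are standard options of the HDFS command-line tools.

```python
from typing import List


def setrep_command(path: str, replicas: int, wait: bool = True) -> List[str]:
    """Build 'hdfs dfs -setrep' for one path; -w waits until replication completes."""
    if replicas < 1:
        raise ValueError("replication factor must be at least 1")
    command = ["hdfs", "dfs", "-setrep"]
    if wait:
        command.append("-w")
    command += [str(replicas), path]
    return command


def fsck_command(path: str = "/") -> List[str]:
    """Build 'hdfs fsck' with per-file, per-block, and block-location detail."""
    return ["hdfs", "fsck", path, "-files", "-blocks", "-locations"]
```

Keeping the command construction separate from execution makes the logic easy to test without a running cluster.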

The Future Landscape of Big Data Roles

The Evolving Relationship Between Big Data Engineers and Generative AI

The relationship between big data engineers and generative AI is evolving into a symbiotic partnership where one cannot succeed without the other. In 2026, engineers are increasingly using AI to automate the more mundane aspects of their jobs, such as writing boilerplate code for data pipelines or monitoring cluster health. This allows them to focus on more complex tasks like architectural design and ethical data handling. At the same time, generative AI models are entirely dependent on the high-quality, large-scale datasets that big data engineers provide. Without a robust data infrastructure, even the most advanced AI would be useless.

This shift is also changing the nature of the interview questions themselves. Candidates are now being asked how they would integrate AI into their workflows to improve efficiency and how they ensure the data they provide to these models is free from bias. There is a growing emphasis on “data orchestration,” where the goal is to manage the complex flow of information through multiple AI and ML frameworks. Understanding how to use tools that automate these processes is becoming a core requirement for anyone looking to advance in the field.

Moreover, the rise of AI has led to a greater focus on “explainability.” It is no longer enough for a model to give a result; the organization needs to know why it gave that result. This requires big data engineers to build systems that track the “lineage” of data, showing exactly where a piece of information came from and how it was transformed before it reached the AI. Demonstrating an understanding of this need for transparency is a key way for a candidate to show they are prepared for the more rigorous demands of the modern enterprise.

Emphasizing Green Data Practices and Ethical Data Handling

Ethical data handling and “green data” practices have become permanent fixtures in the 2026 job market as organizations face increasing pressure to be socially and environmentally responsible. Green data refers to the practice of optimizing data storage and processing to minimize energy consumption and reduce the carbon footprint of data centers. This can involve anything from choosing more energy-efficient hardware to designing algorithms that require fewer computational cycles. In an interview, discussing how you have optimized a process to be more resource-efficient can be a powerful way to show you are aligned with modern corporate values.

Ethical data handling, meanwhile, involves more than just following privacy laws like GDPR or CCPA. It includes a proactive commitment to data sovereignty, ensuring that people have control over their own information and that data is not used in ways that are harmful or discriminatory. Candidates should be prepared to discuss how they implement data masking or anonymization to protect personally identifiable information. They should also be able to talk about the ethical implications of the projects they work on, showing that they consider the human impact of their technical decisions.
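Two common protections can be sketched briefly: display masking and keyed pseudonymization. The example is a minimal illustration under stated assumptions, not a compliance recipe. The function names are hypothetical, the secret key would in practice come from a managed secret store, and a keyed hash is pseudonymization rather than full anonymization because anyone holding the key can recompute the tokens.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace an identifier with a stable keyed hash so records can still be joined."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_email(email: str) -> str:
    """Keep the first character and the domain; hide the rest of the local part."""
    local, _, domain = email.partition("@")
    if not domain:
        return "*" * len(email)  # not an email address, so mask everything
    return local[:1] + "*" * max(len(local) - 1, 0) + "@" + domain
```

Masking suits dashboards and logs where a human needs a hint of the value, while pseudonymized tokens suit analytics pipelines that must link records without exposing the raw identifier.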

These considerations are no longer just “nice-to-have” skills; they are often legal and operational requirements. Organizations are looking for professionals who can navigate the complex regulatory landscape and help the company maintain its reputation as a responsible steward of data. By highlighting your experience with these practices, you demonstrate a level of maturity and professional responsibility that goes beyond simple technical skill. This holistic approach to data management is what will define the leading professionals in the field for years to come.

Translating Data-Speak Into Business Value: The Ultimate Competitive Advantage

The ultimate competitive advantage for any candidate in 2026 remains the ability to translate complex “data-speak” into clear business value. While the technical details of YARN scheduling or HDFS replication are important, they are ultimately just means to an end. The end goal is to help the company make better decisions, grow its revenue, and serve its customers more effectively. A professional who can explain how a new data architecture will reduce the time it takes to launch a new product or how a more accurate predictive model will save the company millions in lost customers is far more valuable than one who can only talk about code.

In the final synthesis of an interview, it is this ability to connect the dots between technology and strategy that leaves the most lasting impression. It shows that you understand the big picture and that you are committed to the success of the entire organization, not just your specific department. This requires a broad understanding of the industry you are working in and a keen interest in the challenges the business is facing. By positioning yourself as a strategic partner rather than just a technical resource, you become a much more compelling candidate.

As the interview concludes, the focus should shift toward the actionable next steps that can be taken to drive the organization forward. This might involve discussing how to implement a new data governance framework or how to better integrate real-time analytics into the existing decision-making process. The candidate who can provide these types of forward-thinking insights proves they are ready to hit the ground running. Ultimately, the successful big data professional of 2026 is one who combines deep technical mastery with a sharp business mind and a strong ethical compass, ensuring that they are not just prepared for the job but ready to lead the way into the future.